The end of the school year is a time to reflect on accomplishments and -- ideally -- to abandon regrets, or at least learn from them.

Last September, professors from institutions around the country shared with “Inside Digital Learning” their plans for new classroom initiatives and made some predictions about what they might accomplish. We went back to them recently to ask how those plans went. A few said they were too busy with an end-of-year crush of grading to reply. Here's what the rest of them reported back.

Sandi Connelly, lead faculty in online learning in science, Rochester Institute of Technology

What she tried: Incorporating Microsoft speech-recognition software and a new learning interface, with the goal of keeping students more engaged.

What worked well: As with all new implementation, there are hurdles. Both Microsoft Translator and JoVE Core Bio resources were embraced by the students. In the face-to-face classes, all students benefit from engaging with MS Translator. Many save the transcript, and when coupled with lecture-capture technology, MS Translator has eliminated the “one and done” of lectures, giving students more opportunity to succeed. Online, MS Translator allows me to have real-time office hours with all students, taking away the need to wait for a captionist to join us. This allows me to be more accessible to them.

With JoVE Core Bio, the students have been engaging with content through the eyes of scientists. The integration of scientific articles directly with the fundamental content affords students the opportunity to learn in different ways -- study the fundamentals and then apply their knowledge, or read the articles to understand the why and then work through the content to understand the how. This has been a very important change to engage my nonmajor students in the courses and help them to approach biology more holistically.

I have seen approximately a 26 percent average increase in the time spent engaged with materials after adding JoVE Core to my classes. While I would like to see even more students engage with the materials, this is not an insignificant change and has led, directly or indirectly, to higher exam averages this term.

What didn't work well: With Microsoft Translator, you are dependent on a reliable internet connection! A LAN connection is important for integration with the reference library for my courses. This can be cumbersome, as many institutions are phasing out LAN connections. Further, lost connections stop the captioning, and waiting for re-establishment significantly impacts the cadence of a lecture.

As JoVE is in beta, though building rapidly, there are a limited number of practice questions available. For many faculty, self-check quizzes and homeworks are not something for which they want to spend time writing questions. As the database and teaching resources for JoVE Core continue to increase, this will be a fantastic classroom resource at an affordable price.

What she learned: Students do not want to have to bounce between resources for class content. Minimizing the “traffic congestion” will help everyone keep tabs on the successes and challenges faced but will also make the logistical learning curve smaller -- improving the feeling for students that they can handle the course and ultimately be successful.

Anna Gold, university librarian, Worcester Polytechnic Institute, and Lori Ostapowicz Critz, associate director for library academic strategies, Worcester Polytechnic Institute

What they tried: Opened a new digital scholarship lab centered on project-based learning.

What worked well: Since its opening, the Shuster Lab for Digital Scholarship has become a popular home for informal and course-related digital scholarship. It is a daily destination for project meetings, study groups and presentation practice sessions, as well as for individuals needing the equipment or specialized software. Our instruction librarians routinely meet students in the lab to conduct research consultations and to teach course-embedded information literacy classes.

The lab has inspired several successful new initiatives, including a series of digital scholarship workshops on digital exhibits/collections fundamentals, mapping, and text mining and analysis. The workshops provide an introduction to the topics, along with an invitation to attendees to seek one-on-one assistance on individual projects. Relationships have been fostered with several faculty members and students.

The WPI Digital Volunteers group also initiated a monthly event series to bring the WPI community together to contribute to digital crowdsourced projects with a global impact. The series launched with a Wikipedia editathon for Native American Heritage Month, and has included a Black History transcribeathon, Humanitarian mapathons, hackathons, and other targeted editathons. Events have been co-sponsored by several student organizations and draw attention to global projects and how to support them digitally.

Perhaps the most significant activity in the Shuster Lab was a humanities inquiry seminar taught by associate teaching professor Joe Cullon, titled Urban Digital History -- Parks, People and Politics in Worcester, Mass. This seminar guided students through the use of recent digital history applications, such as Omeka and Juxtapose, and the creation of a complete digital history site. The course helped illustrate the potential of the lab to support research and learning in digital scholarship and opportunities for the Gordon Library to provide assistance and guidance to students and scholars in these endeavors.

What didn't work well: During this first year, attendance for activities was generally low. Students and faculty are very busy during our fast-paced, intense seven-week terms, and it is difficult to anticipate the optimal timing for these add-on activities.

Integration of the Shuster Lab into the curriculum has been somewhat slow, although Professor Cullon’s class gave us a chance to pilot the use of the lab across a full term and shone a light on the lab as planning for the next academic year got underway.

What they learned: We are going to use targeted marketing and a personalized approach to identify classes each term that could benefit from utilizing the equipment and/or digital scholarship services available.

Elizabeth Howard, program manager of student affairs, New Mexico State University College of Engineering

What she did: Remodeled an existing classroom space into an active learning environment, via a Steelcase grant.

What worked well: The Active Learning Classroom has been a wonderful asset to the College of Engineering at New Mexico State University. The classroom was used for the Introduction to Engineering course and for Engineering Statics and Dynamics, with a total of 18 sections across two semesters. The professors used the active learning environment to run mini impromptu design challenges and to foster student collaboration and teamwork. The professors used the mini whiteboards to have students work in teams on assignments in class, which created an environment for students to interact with one another. The classroom really encouraged group learning and communication. Students who typically would have sat in the back of a traditional lecture room were in the middle of a group activity at their table. This increased their ability to solve problems quickly and communicate with one another.

What didn't work well: One of the constraints of the classroom was its actual size. The setup is for 32 students, but due to student mentors in the class, it was difficult to quickly change the setup of the classroom. Ideally the classroom would be arranged differently depending on the course curriculum for the day. The classroom stayed in a similar layout for the convenience of the instructors.

What she learned: Using the Active Learning Classroom proved to the College of Engineering and its professors that this type of environment is conducive to learning. The student and professor feedback was positive -- both faculty and students are hopeful that additional classrooms in the College of Engineering will adopt a similar layout. Due to the positive feedback in the fall, we remodeled another classroom to follow a similar layout, and it was completed for the spring semester. Based on classroom observations, the greatest impact the classroom had was increasing communication among classmates and creating a sense of team among the different groups.

Vera Kennedy, sociology and education instructor, West Hills Community College Lemoore

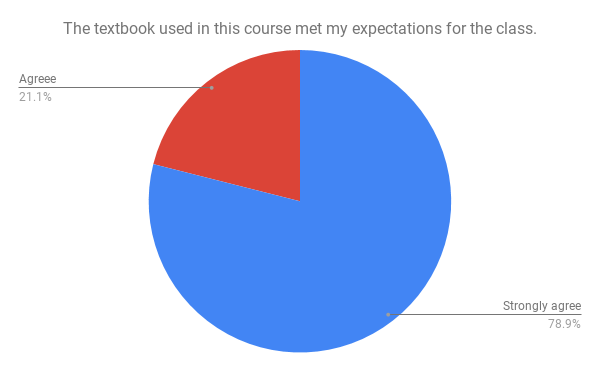

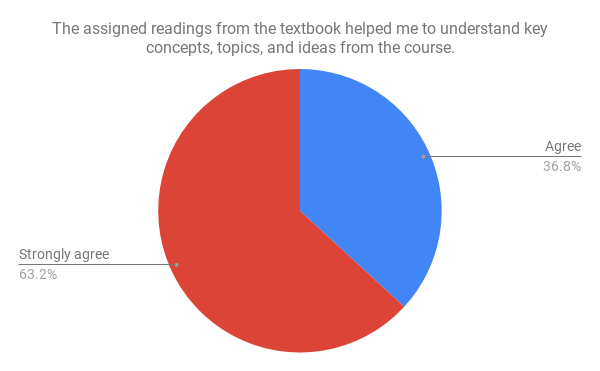

What she tried: Incorporating a new self-authored OER textbook into her cultural sociology class.

What worked well: All students were able to access the textbook on multiple devices (i.e., desktop, laptop, tablet and cellphone). Students reported the OER textbook met their expectations and the assigned readings from the book helped them understand key concepts, topics and ideas from the course. Some student comments included:

- “I think providing a free online book is a great idea because then students don’t have that as an excuse.”

- “I feel like I'm leaving being a lot more confident in who I am and my culture. I think the activities in class really helped us further understand all the topics. Over all, I just really enjoyed and appreciated this class.”

- “The textbook was well put together and all of the assignments in the class reflected what the textbook taught us. The interactive assignments made the readings easier to understand when we were given applications to the concepts.”

- “The book was great because it was straight to the point; it was descriptive but not too long to bore anyone.”

What didn’t work well: Students expressed challenges in understanding some of the terms. One student explained, “I think the lectures in class could incorporate the actual terminology we learned in the reading more thoroughly.” Another student said, “I think there was a lack of explicit definitions for some terms. I never thought I'd say this, but I think it may just have been a bit too short and concise.” Others stated learning to apply the concepts in real world situations was difficult. Finally, some students found the inability to annotate the text inhibited its functionality as an OER material. One student shared, “The only thing I could not do was highlight certain areas.”

What she learned: I need to expand the definition of terms with more than one example pertaining to the subject criteria. I also should cover a wider range of topics related to the course material in my examples in the text, lectures and applications. Lastly, I most recently discovered a new application for faculty and students to upload and annotate commercial and OER texts called Perusall. I am test piloting this annotation tool in a couple of my OER courses this semester and am working on refining its use and application in my class for the coming fall.

Ruben Mancha, assistant professor of information systems, Babson College

What he tried: Adding technology kits and other experiential elements to connect students to information technology subject matter.

What worked well: Students enjoyed the fast-paced course dynamics: a combination of short lectures, brief reading discussions and teamwork to build Internet of Things (IoT) prototypes. They gained hands-on experience programming IoT/wearables, managing teams following Agile principles, designing digital interfaces, conceiving business and revenue models for their technology, and presenting their innovations and start-ups to a panel of judges, which included Michael Sullivan from Verizon Innovation Labs and Cheryl Kiser, Emily Weiner and Ken Freitas from Babson’s Lewis Institute.

The partnership with Verizon through the Lewis Institute’s IoT for Good initiative brought real challenges and purpose to the course. Prototypes built by student teams included IdBracelet, a wearable using blockchain technology to offer identity-management services to refugees; meQ, a digital platform business that helps companies monitor and optimize their employees’ stress levels; and Farmer Bob, a nonprofit that offers IoT solutions and data analytics to optimize yield in small farms.

What didn’t work well: In an intensive elective, students have to learn many technical concepts in a short period. The course would benefit from additional in-class time to cover the basics of IoT technologies and their programming and to explore in more detail the connection between physical devices and digital interfaces (e.g., apps, dashboards).

What he learned: The collaboration with industry is an essential part of this course, and I intend to maintain it. Next year, the course will span seven weeks, and students will have more time to explore the basics of programming and the design of digital interfaces.

Robert Meeds, associate professor of communications, California State University at Fullerton

What he tried: Transformed Digital Foundations course from face-to-face elective to online requirement.

What worked well: Creating a YouTube Channel (COMM 317) that functions as an online, easy-to-access content library worked very well. It took a lot of work to create and organize the more than 125 videos we created for this course, but student feedback about the video content has been really positive. Students also like the flexibility of accessing the lecture and tutorial content when they want to. Though it took a while for the instructors as well as the students to acclimate to the mostly online format, putting all the instructional content online and then using brief 50-minute in-person lab sessions worked well to reinforce key points and review student progress.

What didn’t work well: When students are learning in a traditional classroom or lab setting, they have opportunities to help each other and learn from each other. We’ve lost some of those kinds of interactions under the new format. Also, each of the three course instructors reported that figuring out how best to use those 50 minutes we had in person with the students each week proved to be a challenge. In general, we all tried to cram too much into those 50 minutes in the early going. From a technical perspective, we experienced challenges that are common to other online courses, such as the university’s LMS file size limits that forced us to create a lot of workarounds for students to get their assignments to us. This made grading cumbersome at times. And, as you’d expect, there were some students whose computers weren’t up to running the required software.

What he learned: Students complete five major assignments as well as some short lab assignments during the semester. We learned that if we break up the major assignments into smaller parts and give students concise, timely feedback on their work in the smaller parts, it’s a workable alternative to face-to-face contact and they can often make adjustments to improve their work before the full assignment is due. We also learned to be more realistic about what we can accomplish in our 50-minute lab sessions and to really focus on making sure each student gets a little bit of our attention and support each week.

Peggy Semingson, associate professor of curriculum and instruction, University of Texas at Arlington

What she tried: Implemented predictive analytics in an attempt to anticipate students' needs.

What worked well: I’ve been steadily using the Inspire for Faculty tool from Civitas Learning for all of my online courses. Previously I used it to send fairly generic nudge emails to students based on the predictive analytics data. These emails were primarily sent out to students based on their success and participation levels in the class. I have since learned that within the software there are email templates that are particular to other factors such as point in the semester. So, what has been going well is I have moved from crafting my own emails to using the templates and encouraging language provided by Civitas and then tweaking the emails based on the other variables. I have also started using the software tool to send and track messages apart from the data itself, so using it as a messaging tool in and of itself. Some people may not like email templates, but I find them to be very strategic, and I don’t have to start from scratch when thinking of what to say. Students continue to be encouraged, generally speaking, by the nudge emails.

What didn’t work well: I still don’t have a calendar or overall strategic approach for when to send out the emails, and because I don’t hear from too many other faculty, I don’t have a very systematic plan for using it. It’s easy to get sidetracked from using a new tool when you are one of the few people using it.

What she learned: I have learned, as the old saying goes, to not reinvent the wheel in terms of crafting nudge emails from scratch. There is a wealth of information in the Civitas software that supports faculty as they craft emails. What I need is an overall strategic approach and especially a timeline for when to send out these emails and then how to track them. Another important need is to talk to other faculty about how they are using the tool. I don’t see the formal infrastructure for doing that with this particular tool, so I have my own informal back channels for talking to other faculty about new digital ideas. It’s still a new and emerging tool. We need to carve out spaces for conversation about specific ideas.

Parrish Waters, assistant professor of biology, University of Mary Washington

What he tried: Encouraging student engagement with help from a formative assessment tool, which he'd used in the classroom previously, but without a cohesive strategy.

What worked well: The formative assessment approaches I’ve experimented with over the last several years have changed the way that I approach my teaching. I was able to get a consistent 50 percent interaction rate with the online formative feedback tool BluePulse this year. This was a slight increase compared to previous years, and though small, I consider it a success. Students offered critical and informative feedback on both my teaching style (the pace and complexity of my lectures) and new assignments I incorporated into my course (some were winners, others losers). My interactions with students have become more honest, and they more comfortably offer frank feedback that helps move my teaching forward.

What didn’t work well: While my students seemed more engaged with the material, their grades this year were not significantly different from past years -- nearly identical in average and spread. I do not see this as a failure. Students' use of this tool was implicitly valuable, both for me to receive the feedback and for them to feel empowered by giving it. Although my efforts to standardize the polls and increase their use had no direct effect on the students’ grades, their engagement with BluePulse added to their sense of ownership over their education in this class, which motivated them to engage more with the material. This helps them retain and recall the large amount of information conveyed in this course much more than rote memorization.

What he learned: Using formative feedback tools allows me to get immediate, sincere and critical feedback on my teaching practices, more so than just asking students in person. For instance, I used brief, ungraded pop quizzes during the last 10 minutes of my class this semester. Students traded quizzes every few questions, grading and discussing questions as they went, before moving on to the next set of questions. I had never used this teaching strategy before and received such overwhelmingly positive feedback through BluePulse after each iteration that it is now a mainstay of my pedagogy. Formative feedback allows me to comfortably test-drive new and innovative pedagogy in my classroom. The candor of the dialogue gives students an active role in our pedagogical experiment.