As Turnitin seeks a “realignment” of what it does, the company best known for its plagiarism detection software hopes it can warm more faculty members to the idea of using technology in writing instruction.

Turnitin in 2014 acquired LightSide Labs, a Carnegie Mellon University-based start-up. On Wednesday, Turnitin launched a rebranded version of the start-up’s Revision Assistant, an online writing tool that uses machine learning to tutor students as they draft their essays.

Elijah Mayfield, who co-founded LightSide Labs and now serves as Turnitin’s vice president of new technologies, said Turnitin approached the start-up as part of a greater “realignment of the core focus of the company.”

Turnitin, Mayfield said in an interview, told him the company wanted to move away from the “administrative challenges with writing” -- in other words, plagiarism -- and be more of a “teacher’s aide.”

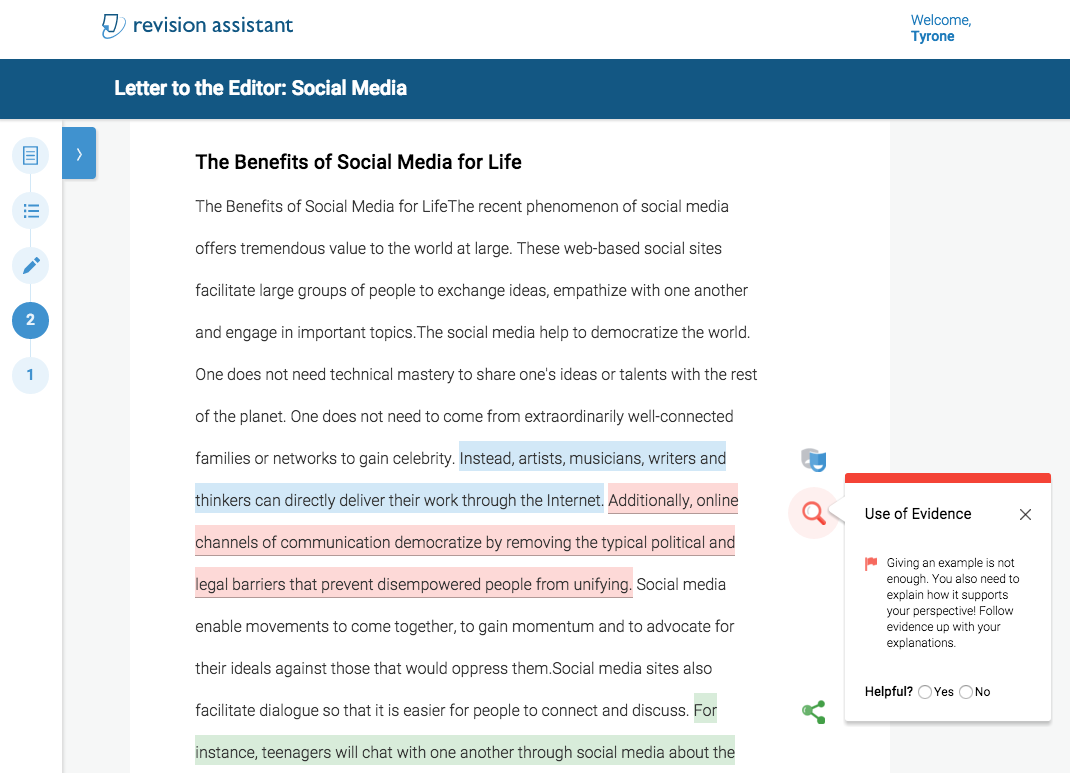

Revision Assistant is a departure from Turnitin’s other products, which focus on evaluating students’ writing after it is turned in. Revision Assistant doesn’t grade students on their writing or check for plagiarism, but gives them feedback in four areas -- language, focus, organization and evidence -- as they write. The feedback can be either positive or negative. For example, the software might remind students to support their thesis with evidence, or compliment those whose language it judges to be clear and precise.

The software, which is aimed at students in grades six through 12 and introductory college writing courses, has been piloted at a handful of middle and high schools, and also in developmental writing courses at institutions such as Dalton State College, in Georgia.

Jenny Crisp, associate professor of English, said Dalton State has used the software as an additional layer of support. When students are not in the classroom, she said, they can log into Dalton State’s learning management system and start prewriting and drafting in Revision Assistant. Once they come to class, the software may already have answered some of their most fundamental questions about writing, meaning they can talk about more advanced topics with a writing instructor, she said.

“It’s good for students that write at 3 o’clock in the morning,” Crisp said in an interview. “They see things change. They see progress made. They just write more, and with beginning writers, frankly, just writing more makes the biggest difference of anything I’ve ever seen.”

There are limitations to the software, however. Writing is restricted to responses to prompts, 40 of which will be available at launch. More will be made available as Turnitin works with school districts and community colleges that are interested in turning their curricula into writing prompts, Mayfield said.

Examples of prompts include writing about “a time that laughter featured prominently in their lives” and “the pros and cons of social media,” Crisp said. Since the software is primarily aimed at K-12 students, several of the prompts are framed as responses to pieces of writing.

Revision Assistant already faces an uphill climb among writing instructors, many of whom philosophically object to turning writing into an activity that can be evaluated by a machine. The National Council of Teachers of English in 2013 issued a position paper on that topic, naming prompt-based writing as one of the organization’s specific concerns. Requiring students to follow strict prompts, the paper argues, “[reduces] the incentive for teachers to develop innovative and creative occasions for writing, even for assessment.”

In a statement, members of the NCTE’s assessment task force said “the ways in which humans and machines analyze writing continue to serve very different outcomes.” The task force members are also involved in the Conference on College Composition and Communication, the NCTE’s professional organization for writing instructors.

“As is the case in K-12 classrooms, teaching writing at the college level that is successful calls for thoughtful response to student writing,” the members said in the statement, naming face-to-face conferences with instructors and feedback on drafts as two examples of responses. “Such human formative assessments are essential building blocks supporting writers' development.”

Even Crisp, who on the whole was satisfied with Revision Assistant, said she “would not trust [it] to grade a student essay.” Turnitin doesn't intend for the software to serve that purpose, either. Revision Assistant doesn’t come with a traditional grading feature, but gives students a score of one to four in each of the four categories. Turnitin calls those scores “signal checks,” since they resemble wireless signal strength logos.

“I don’t want to say ‘never,’ but I’m not sure it can ever replace a human instructor,” Crisp said. “If [students] spend some time with Revision Assistant, they’ll remember that they have to have a hook, that they have to have transitions. Then I can start helping them with the things the computer can’t.”

Mayfield called the skepticism about technology in writing instruction a “valid concern,” and said technology has not yet reached a point where it can be used in upper-level courses teaching advanced, open-ended writing.

“There is room for technology to help that conversation, but it’s not the most crucial place to have technology insert itself right now,” Mayfield said. “We don’t think of [Revision Assistant] as something for upper-level electives where students are able to engage with teachers in a strong dialogue. We see it as empowerment of students who don’t have those skills already.”