Academics publishing in particular fields of chemistry or neuroscience are virtually guaranteed to be cited after five years, but more than three-quarters of papers in literary theory or the performing arts will still be waiting for a single citation.

These vast differences in the rates of work going uncited in different disciplines have emerged from an analysis of bibliometric data from Elsevier’s Scopus database by Billy Wong of Times Higher Education’s data team.

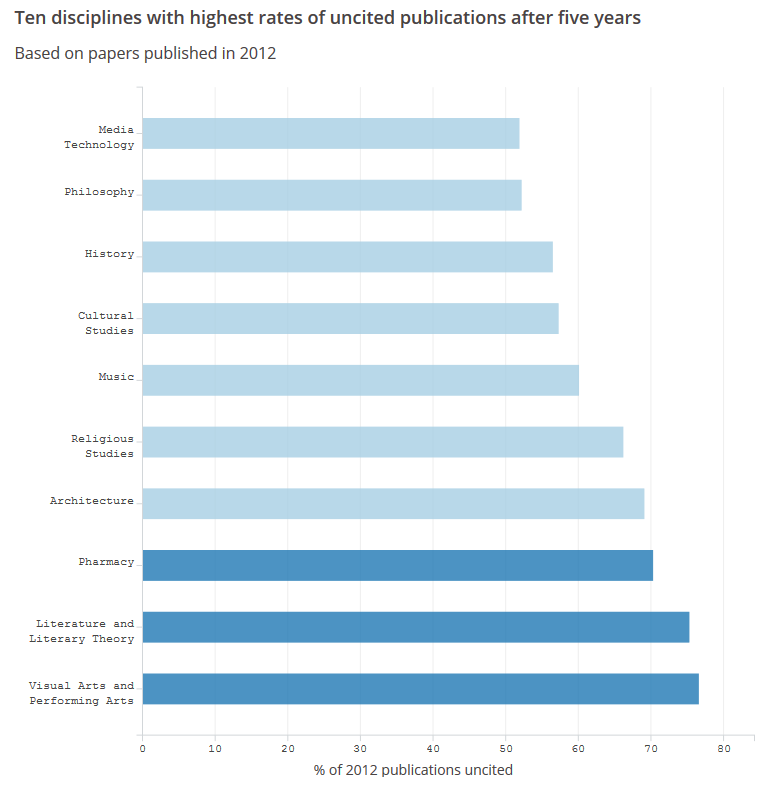

According to the analysis, which looked at disciplines in which at least 10,000 pieces of research were published between 2012 and 2016, almost 77 percent of publications from 2012 in the visual and performing arts were still uncited by 2017.

In literature and literary theory, the share was 75 percent, while in the professional health area of pharmacy (rather than pharmaceutical research) it was 70 percent, and in architecture it was 69 percent.

Most of the subjects with the highest rates of uncited research over the period were in the arts and humanities, with major disciplines such as philosophy and history having more than half of research without a single citation several years later.

However, some science, technology, engineering and math subjects also had relatively high rates of uncited work: in industrial and manufacturing engineering, for instance, 44 percent of 2012 publications were still uncited, while automotive, aerospace and ocean engineering all had uncited rates above 40 percent.

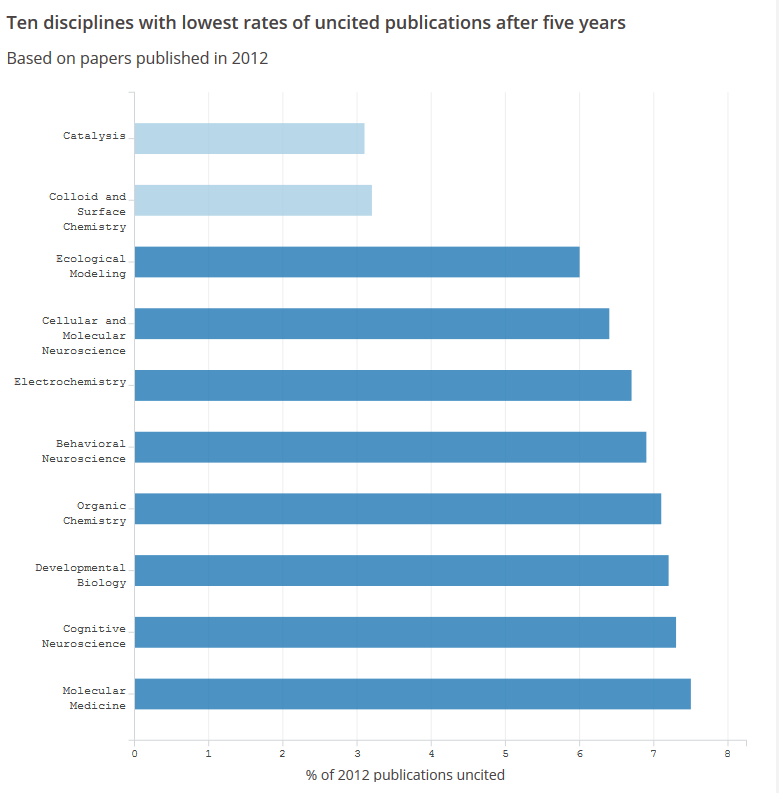

At the other end of the scale, just 3 percent of 2012 papers published in the catalysis subfield of chemical engineering or in colloid and surface chemistry were still uncited at the end of the period. In these fields, almost half of scholarship published in 2016 had already garnered a citation.

For researchers in different disciplines, the huge variation simply demonstrates how citation culture can differ between subjects, rather than being evidence that there is a problem with the quality of research in certain fields.

Marco Caracciolo, assistant professor of English and literary theory at Ghent University, who received the most citations in the subject between 2012 and 2016, according to Scopus data, said that the reasons behind the high share of uncited work in the discipline were “likely to be quite complex.”

For instance, monographs and book chapters “carry a lot of weight in this area of the humanities” and it was much more likely that these -- rather than any journal article that first expressed an idea -- would be cited.

“The general expectation is that articles pave the way for monographs, which will contain the ‘final’ version of an argument -- not the other way around,” said Caracciolo.

He added that the citation culture was also different for scholars on the more theoretical side of literary theory. Here, citation “works by signaling affiliation with a certain movement or theoretical trend.”

“Scholars position their approach not through a comprehensive literature review but by way of strategic citations -- which may result in a relatively small number of highly influential publications (typically in book form) receiving the vast majority of citations,” Caracciolo said.

“This is quite different from what happens in the sciences, where the logic would appear to be more incremental,” he said, adding that his own citation rate could be higher because of his primary field of narrative theory having “a more science-like logic.”

Harriet Barnes, head of higher education policy at the British Academy, also emphasized that “different disciplines will publish and cite research in different ways.”

She said that “for many disciplines in the humanities and social sciences, five years is not enough time to capture the use and impact of research. Some research will have a very long shelf life and continue to have considerable impact for 10 years or more.”

Of course, low-quality research still may exist. The results of peer-review exercises such as the research excellence framework, for instance, suggest that plenty of scholarship is deemed to be of a lower standard.

However, academics question the usefulness of uncited rates as a way to measure quality between disciplines. Even in STEM subjects, the rate of uncited work may be influenced by discipline-specific factors.

Frede Blaabjerg, a highly cited academic in the field of industrial and manufacturing engineering and a professor at Aalborg University in Denmark, said that in engineering there was often a “focus on making artifacts, detailed testing and also bringing that into real application -- that takes time and publication is not first priority.”

Different subdisciplines of engineering were also quite narrow, he added, meaning that there may be a “relatively low volume of researchers who can cite a paper.”

Blaabjerg said that many areas of engineering were also driven by conferences “in order to present things fast and first,” and papers submitted to such events may not be cited in quite the same way.

This is a point that tallies with the data analysis if conference papers are removed and only original or review articles are counted. In this case, industrial and manufacturing engineering has a much lower uncited rate for 2012 papers -- 29 percent -- while the engineering subdiscipline with the highest rate becomes aerospace engineering (30 percent).

Naturally, the uncited rate falls across most subjects once conference papers and other publications less likely to receive citations, such as editorials, are removed.

However, even with this approach -- which is one that has been favored in other recent attempts to quantify rates of uncited scholarship -- there are still 12 disciplines, again mainly in the arts and humanities, in which more than half of papers were uncited after five years.

Even with the caveats about the citation patterns seen in different disciplines, there is a danger that such figures could be seized upon by those wanting to question the value of publicly funded research.

Certainly, funders are wary of this possibility. Recent moves such as the decision of Britain's research councils to back an international declaration on the responsible use of metrics suggest a wider drive to represent impact as more than just citation counts.

“The research community is growing ever more conscious about the limits of citation metrics as proxies for quality or impact,” said Barnes. “Research impact is often complex and citations alone will not tell the full story of the effect a piece of research has on academia and on wider society.”