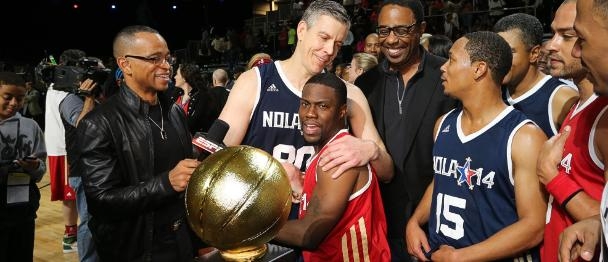

Arne Duncan (center) receiving the MVP trophy at the NBA celebrity all-star game.

NBA

Dear Secretary of Education Arne Duncan:

Congratulations on your MVP award at the NBA Celebrity All-Star game: 20 points, 8 boards, 3 assists and a steal -- you really filled up that stat sheet. Even the NBA guys were amazed at your ability to play at such a high level -- still. Those hours on the White House court are paying off!

Like you, I spent some time playing overseas after college and have long been a consumer of basketball box scores -- they tell you so much about a game. I especially like the fact that the typical box score counts assists, rebounds and steals — not just points. I have spent many hours happily devouring box scores, mostly in an effort to defend my favorite players (who were rarely the top scorers).

As coaches of young players, my wife Michele and I (she is the real player in the family) expanded the typical box score: we counted everything in the regular box score, then added “good passes,” “defensive stops,” “loose ball dives” and anything else we could figure out a way to measure. This was all part of an effort to describe for our young charges the “right way” to play the game. I think you will agree that “points scored” rarely tells the full story of a player’s worth to the team.

Mr. Secretary, I think the basketball metaphor is instructive when we “measure” higher education, which is a task that has taken up a lot of your time lately. If you look at all the higher education “success” measures as a basketball box score instead of a golf-type scorecard, it helps clarify two central flaws.

First, exclusivity. Almost every single higher education scorecard fails to account for the efforts of more than half of the students actually engaged in “higher” education.

At Mount Aloysius College, we love our Division III brand of Mountie basketball, but we don’t have any illusions about what would happen if we went up against those five freshman phenoms from Division I Kentucky (or UConn/Notre Dame on the women’s side) -- especially if someone decided that half our points wouldn’t even get counted in the box score.

You see, the databases for all the current higher education scorecards focus exclusively on what the evaluators call “first-time four-year bachelor’s-degree-seeking students.” Nothing wrong with these FTFYBDs, Mr. Secretary, except that they represent less than half of all students in college, yet are the only students the scorecards actually “count.”

None of the following “players” show up in the box score when graduation rates are tabulated:

- Players who are non-starters (that is, they aren’t FTFYBDs) — even if they play every minute of the last three quarters, score the most points and graduate on time. These are students who transfer (usually to save money, sometimes to take care of family), spring enrollees (increasingly popular), part-time students and mature students (who usually work full-time while going to school).

- Any player on the team, even a starter, who has transferred in from another school. If you didn’t start at the school from which you graduated, then you don’t “count,” even if you graduate first in your class!

- Any player, even if she is the best player on the team, who switches positions during the game: Think two-year degree students who switch to a four-year program, or four-year degree students who instead complete a two-year degree (usually because they have to start working).

- Any player who is going to play for only two years. This is every single student in a community college and also graduates who get a registered-nurse degree in two years and go right to work at a hospital (even if they later complete a four-year bachelor’s degree, they still don’t count).

- Any scoring by any player that occurs in overtime: Think mature and second-career students who never intended to graduate on the typical schedule because they are working full time and raising a family.

The message sent by today’s flawed college scorecards is unavoidable: These hard-working students don’t count.

Mr. Secretary, I know that you understand how essential two-year degrees are to our economy; that students who need to transfer for family, health or economic reasons are just as valuable as FTFYBDs; and that nontraditional students are now the rule, not the exception. But current evaluation methods almost universally ignore readily available data and are out of sync with the real lives of many students who simply don’t have the economic luxury of a fully financed four-year college degree. None of the five types of students listed above shows up anywhere in the box score.

“Scorecards” should look more like box scores and include total graduation rates for both two- and four-year graduates (the current IPEDS overall grad rate), all transfer-in students (it looks like IPEDS may begin to track these), as well as transfer-out students who complete degrees (current National Student Clearinghouse numbers). These changes would provide a more accurate result for the student success rate at all institutions.
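To make the arithmetic concrete, here is a minimal sketch using invented numbers. The group labels and counts below are hypothetical, not IPEDS or Clearinghouse figures. It compares a rate computed only on first-time bachelor's-seeking students with one that counts every group on the roster.

```python
# Hypothetical student groups: (enrolled, completed a degree).
# Every number here is invented for illustration only.
groups = {
    "first_time_bachelors": (400, 240),
    "transfer_in": (150, 105),
    "transfer_out": (100, 60),
    "two_year_degree": (250, 170),
    "part_time_or_mature": (100, 55),
}


def completion_rate(names):
    """Share of students in the named groups who completed a degree."""
    enrolled = sum(groups[name][0] for name in names)
    completed = sum(groups[name][1] for name in names)
    return completed / enrolled


# Scorecard-style rate: only the first-time bachelor's cohort is counted.
scorecard_rate = completion_rate(["first_time_bachelors"])

# Box-score rate: every group is counted.
box_score_rate = completion_rate(list(groups))

# Share of all enrolled students that the narrow cohort represents.
total_enrolled = sum(enrolled for enrolled, _ in groups.values())
counted_share = groups["first_time_bachelors"][0] / total_enrolled

print(f"Scorecard-style rate: {scorecard_rate:.1%}")
print(f"Box-score rate: {box_score_rate:.1%}")
print(f"Students the scorecard counts: {counted_share:.1%}")
```

In this made-up roster the narrow rate describes only 40 percent of the students enrolled; the other 60 percent never reach the stat sheet.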

Another relatively easy fix would be to break out cohort comparisons that would allow Scorecard users to see how institutions perform when compared to others with a similar profile (as in the Carnegie Classifications).

The second issue is fairness.

Current measurement systems make no effort to account for the difference between (in basketball terms) Division I and Division III, between “highly selective schools” that “select” from the top echelons of college “recruits” and those schools that work best with students who are the first in their families to go to college, or low-income, or simply less prepared (“You can’t coach height,” we used to say).

As much as you might love the way Wisconsin-Whitewater won this year’s Division III national championship (last-second shot), I don’t think even the most fervent Warhawks fan has any doubt about how they would fare against Coach Bo Ryan’s Division-I Wisconsin Badgers. The Badgers are just taller, faster, stronger — and that’s why they’re in Division I and why they made it to the Final Four.

The bottom line on fairness is that graduation rates track closely with family income, parental education, Pell Grant eligibility and other obvious socioeconomic indicators. These data are consistent over time and truly incontrovertible.

Mr. Secretary, I know that you understand in a personal way how essential it is that any measuring system be fair. And I know you already are working on this problem, on a “degree of difficulty” measure, much like the hospital “acuity index” in use in the health care industry.

The classification system that your team is working on right now could assign a coefficient that weighs these measurable mitigating factors when posting outcomes. Let us hope that your team succeeds in scoring schools more fairly. Such a coefficient would also help to identify those institutions that are doing the best job at serving the students with the fewest advantages.

In the health care industry, patients are assigned “acuity levels” (based on a risk-adjustment methodology), numbers that reflect a patient’s condition upon admission to a facility. The intent of this classification system is to consider all mitigating factors when measuring outcomes and thus to provide consumers accurate information when comparing providers. A similar model could be adopted for measuring higher education outcomes.

This would allow consideration of factors such as (1) Pell eligibility rates, (2) income relative to poverty rates, (3) the percentage of students who are first in their families to attend college and (4) SAT scores. A coefficient that factors in these “challenges” could best measure higher education outcomes. Such “degree of difficulty” factors, like “acuity levels,” would provide consumers accurate information for purposes of comparison.
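One way such a coefficient might work, offered purely as a sketch: estimate the graduation rate a school would be expected to post given its student profile, then score the school on how far it beats or misses that expectation. The baseline, the weights and the two school profiles below are all hypothetical assumptions chosen for illustration. They are not estimates from real data and not the Department's method.

```python
# Hypothetical baseline: expected graduation rate for a school whose
# students face none of the listed challenges. An assumption, not data.
BASELINE_RATE = 0.80

# Hypothetical weights: how much each factor is assumed to lower the
# expected graduation rate. These are NOT estimated from real data.
WEIGHTS = {
    "pell_share": 0.30,       # share of students eligible for Pell Grants
    "first_gen_share": 0.20,  # share who are first-generation-to-college
}


def expected_rate(profile):
    """Expected graduation rate given a school's student profile."""
    penalty = sum(WEIGHTS[key] * profile[key] for key in WEIGHTS)
    return BASELINE_RATE - penalty


def difficulty_adjusted(actual_rate, profile):
    """Actual minus expected rate; positive means beating expectations."""
    return actual_rate - expected_rate(profile)


# Two invented schools with very different student bodies.
selective_school = {"pell_share": 0.10, "first_gen_share": 0.10}
access_school = {"pell_share": 0.60, "first_gen_share": 0.50}

selective_score = difficulty_adjusted(0.78, selective_school)
access_score = difficulty_adjusted(0.58, access_school)

print(f"Selective school: raw 78%, adjusted {selective_score:+.2f}")
print(f"Access school: raw 58%, adjusted {access_score:+.2f}")
```

In this invented comparison the access school trails by 20 points on the raw rate yet comes out ahead once the degree of difficulty is counted, which is exactly the kind of result a raw scorecard hides.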

Absent such a calculation, colleges will continue to have every incentive to “cream” their admissions, and every disincentive against serving the students you have said are central to our economic future, including two-year, low-income and minority students. That’s the “court” that schools like Mount Aloysius and 16 other Mercy colleges play on. We love our FTFYBDs, but we work just as hard on behalf of the more than 50 percent of our students whose circumstances require a less traditional but no less worthy route to graduation. We think they count, too.

Thanks for listening.

Thomas P. Foley

President

Mount Aloysius College