AAC&U's VALUE approach to student learning.

AAC&U

Meaningful assessment of student learning, beyond tests and grades, befuddles even seasoned educators. Are students really absorbing what they’re being taught, and will they remember it later on? How can that be measured and compared nationally? Those questions, among others, drive the work of the Association of American Colleges and Universities, which today released a report on what it calls a “groundbreaking approach” to assessing student learning.

“This project represents the first attempt to develop a large-scale model for assessing student achievement across institutions that goes beyond testing,” Lynn Pasquerella, president of AAC&U, said in a statement. She called the preliminary data on which the report is based “encouraging” and promising in terms of improving educational quality and equity.

The report, “On Solid Ground,” includes results from the first two years of AAC&U’s national Valid Assessment of Learning in Undergraduate Education (VALUE) initiative. It’s something of a portrait of student performance in critical thinking, written communication and quantitative literacy. Educators and employers agree all are essential for student success in the workplace and in life, according to AAC&U.

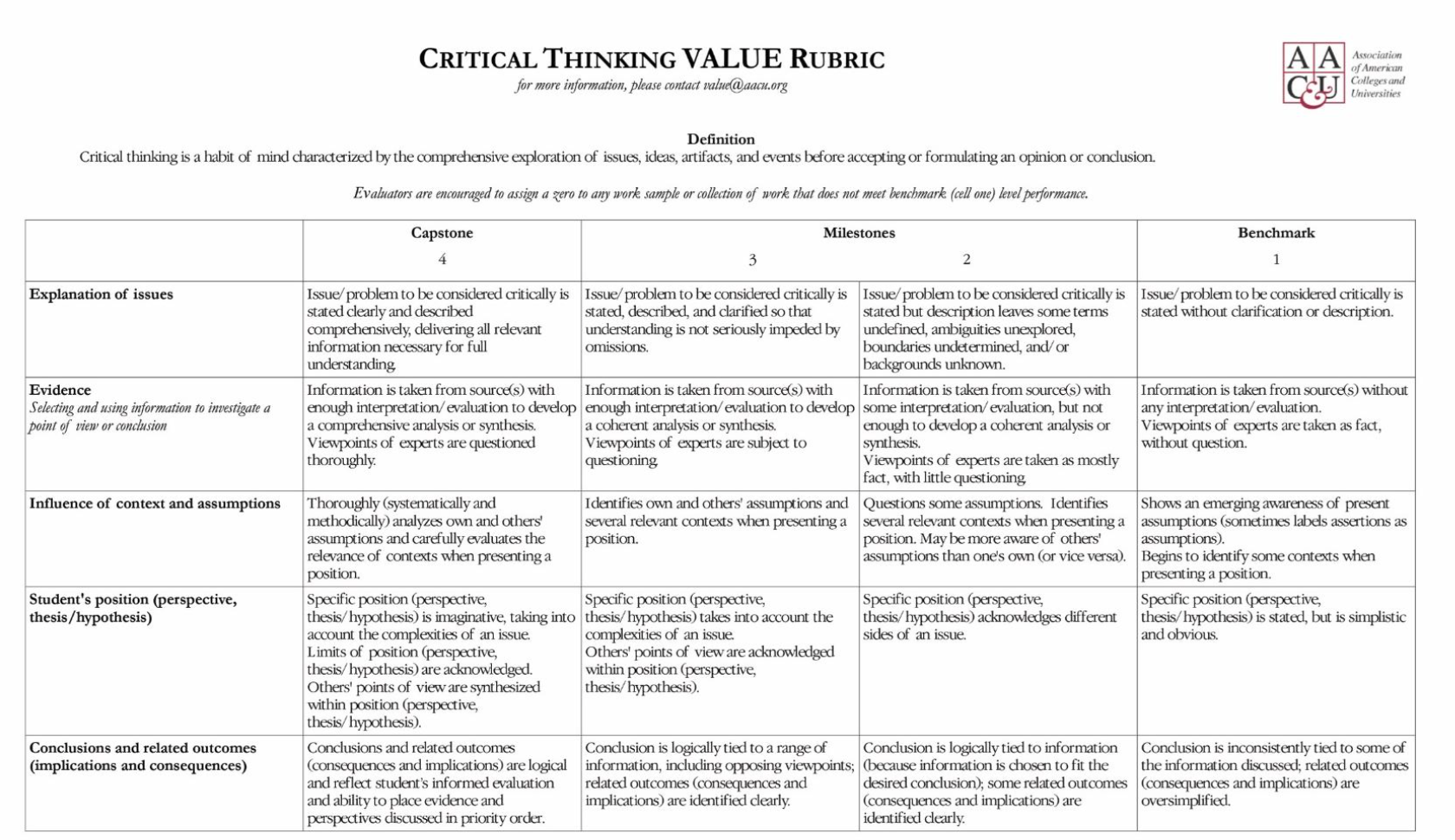

Professors from 92 public and private associate and bachelor’s degree-granting institutions across a range of competitiveness uploaded approximately 21,000 samples of student work to a web-based platform. Some 288 trained educators from across disciplines then scored the work on a scale of zero to four, using AAC&U’s previously released VALUE rubrics in the key areas. About one-third of the samples were scored twice, to ensure consistency.

Here's a sample rubric:

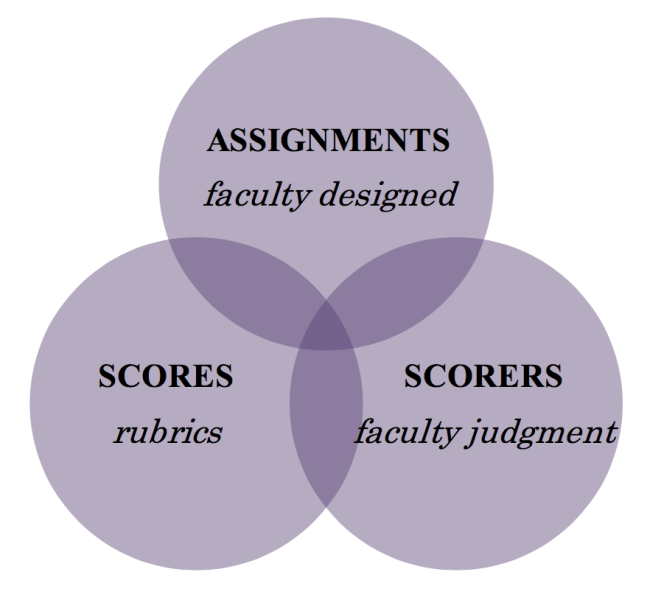

The VALUE approach tries to get at student learning in ways that standardized tests or other assessment practices don’t by “embracing” complexity instead of trying to eliminate or reject it. So rather than something “divorced from the curriculum,” the report says, student assessments included in the initiative were all designed by professors in an actual college course.

All work came from students who had completed 75 percent or more of the required coursework for an associate or bachelor’s degree. The students’ samples were supposed to show some of their best, most motivated work, to see how much they’d learned thus far in their studies.

Lots of Critical Thinking, but Room for More

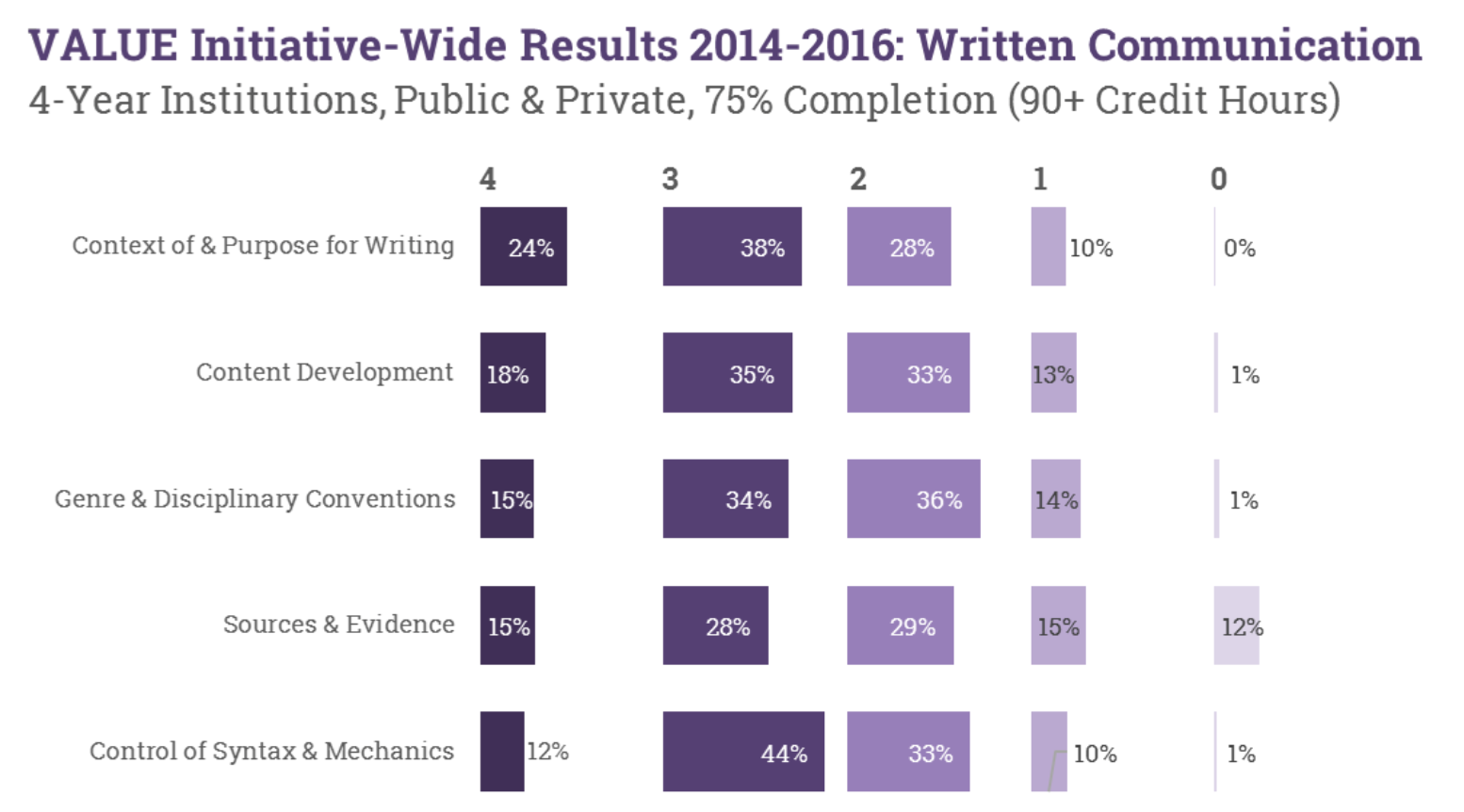

A key finding is that student performance was strongest in written communication. It’s good news for the many institutions that have in recent decades focused on improving student writing. Yet the study also revealed that students still struggle to use evidence to support their written arguments.

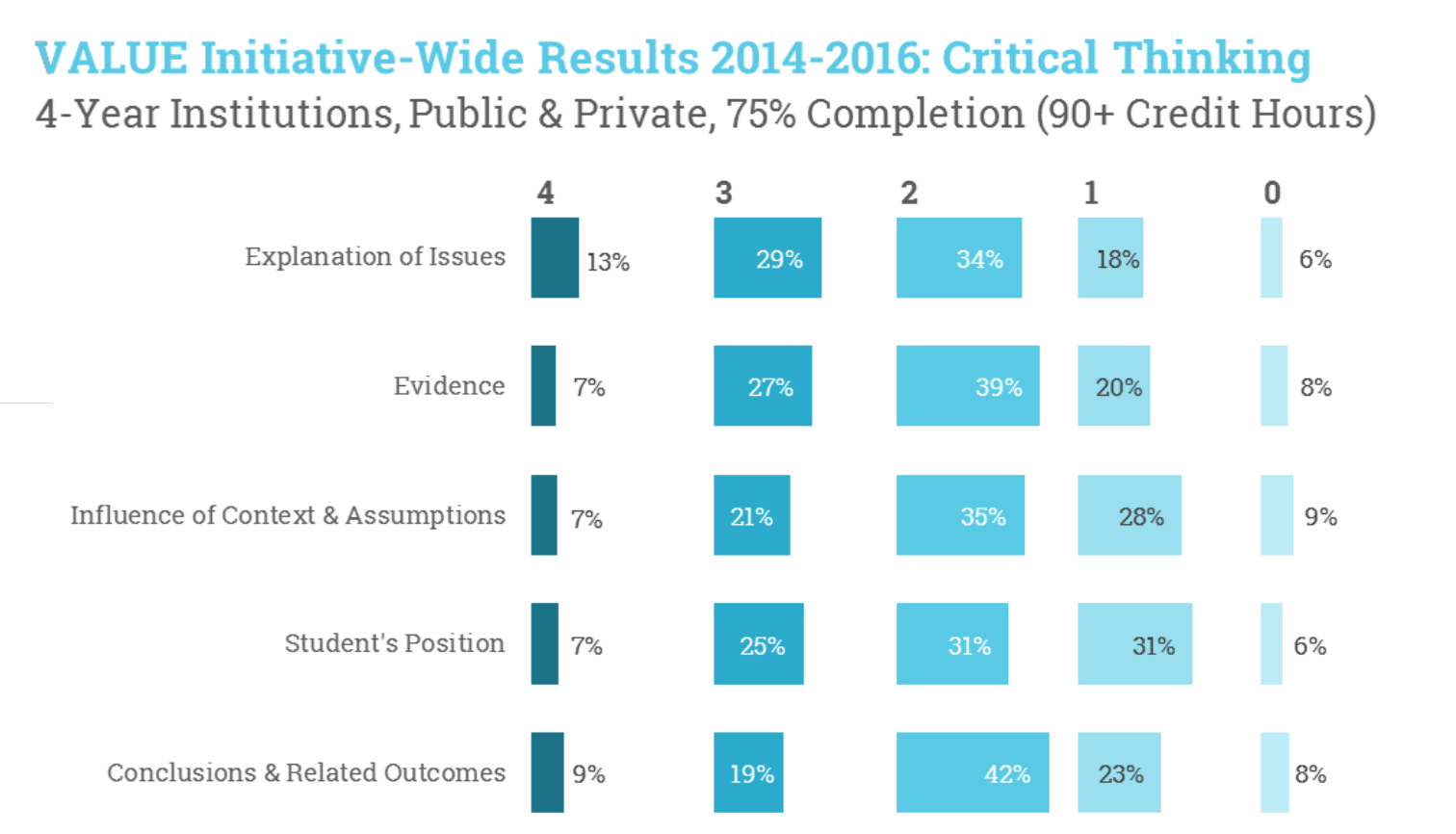

Regarding critical thinking, students tended to explain issues well and present related evidence. However, the study says, students have more trouble “drawing conclusions or placing the issue in a meaningful context (i.e., making sense out of or explaining the importance of the issue studied).”

The curricular focus on developing critical thinking skills in students through their major programs, which faculty claim is a priority, according to the study, “is reflected in the higher levels of performance among students in upper division course work in the majors.”

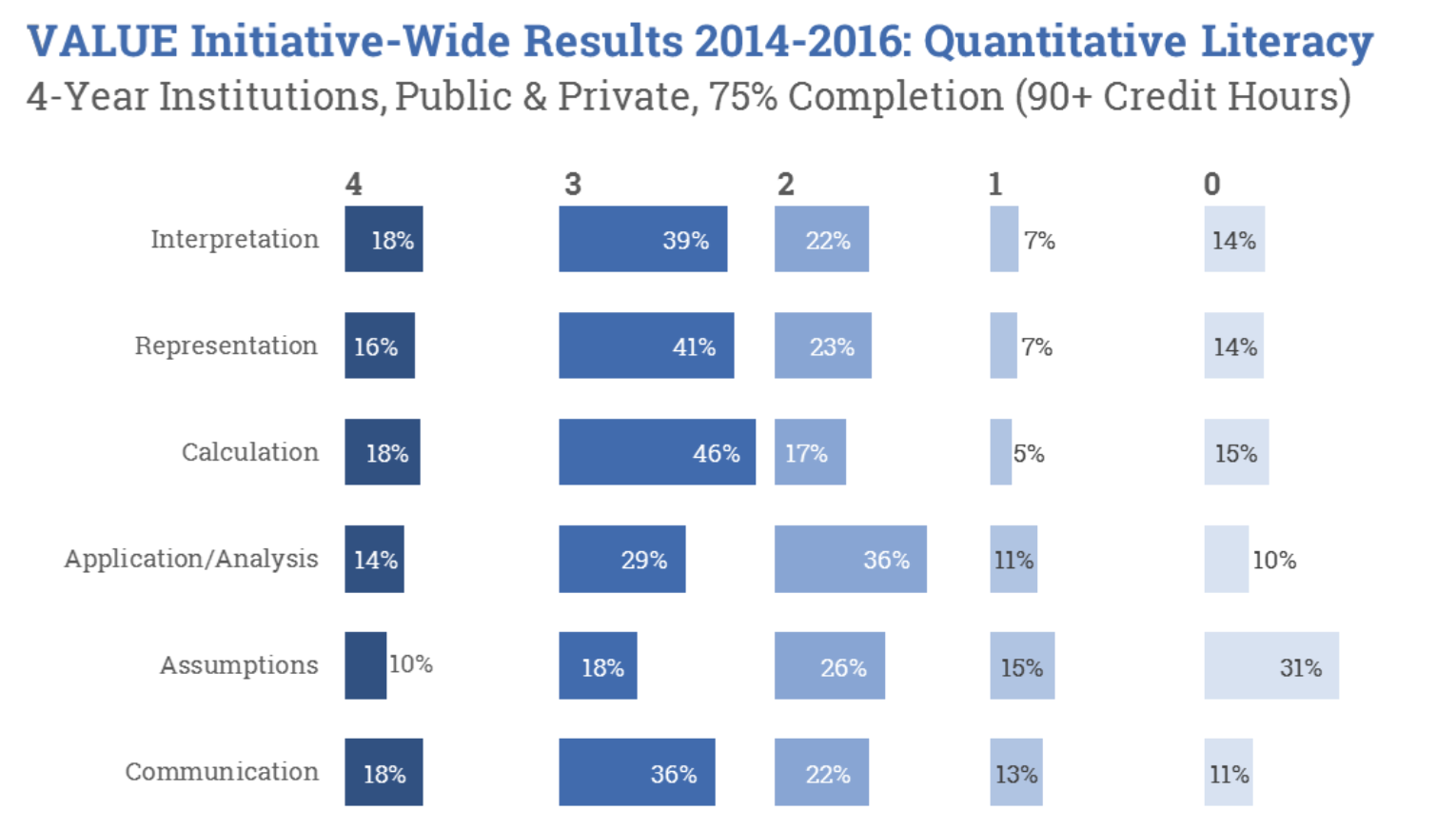

Students showed strength in calculating and interpreting data. Their quantitative skills were generally weaker, however, when it came to making assumptions and applying their knowledge. Such results suggest that students are getting the mechanics of math and related skills, but not so much the “why,” or when and where to use certain calculations, according to AAC&U.

"In a world awash in data, VALUE generates evidence, evidence that points to what is working well and, critically, where there is room for improvement," AAC&U asserts. "It empowers faculty as both disciplinary and pedagogical experts, yet at the same time challenges faculty to interrogate their own teaching practices and assumptions about how their students in particular come to master important knowledge, skills, and abilities within the context of their classes. If faculty are truly the owners and arbiters of the curriculum at each institution, they, in partnership with their students, must also own the learning."

Achievement Levels

Students at four-year institutions who had completed 90 credit hours showed higher average achievement levels than students at two-year institutions who had completed 45 credit hours, the report says, “suggesting that the continued focus on core essential learning outcomes (e.g., Writing Across the Curriculum, upper-division writing-intensive courses or upper-division courses that require thinking critically within the major) supports enhanced levels of higher-order achievement across the three learning outcomes.”

Assignments themselves were important, too, as early results point “in several ways to the importance of the assignments in students’ abilities to demonstrate higher, second-order quality work,” reads the report. “What institutions ask their students to do makes a difference for the quality of the learning.”

Scorers of student work weighed in on the validity of the rubrics, and reported that they covered the “core dimensions of learning” in each of the learning outcomes. They also said the rubrics could be used for judging quality of learning in different courses in different fields by faculty from different departments, a testament to the transferability of the initiative to institutions beyond the pilot group.

Regarding reliability, there was general agreement among raters on student scores. Weighted percent agreement ranged from 84 percent on some dimensions of quantitative literacy to 94 percent on some dimensions of written communication.
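
For readers unfamiliar with the statistic, here is a minimal sketch of how a weighted percent agreement figure can be computed for double-scored samples. The article does not say which weighting scheme the report used, so the linear weights below (full credit for identical scores, partial credit that shrinks as two scores move apart on the zero-to-four scale) are an assumption, and the function name and the sample scores are hypothetical.

```python
def weighted_percent_agreement(first, second, min_score=0, max_score=4):
    """Linearly weighted percent agreement between two raters.

    Assumption: each pair of scores earns 1 - |a - b| / (max - min),
    so identical scores count fully and scores at opposite ends of
    the scale count for nothing. The report's actual scheme may differ.
    """
    if len(first) != len(second) or not first:
        raise ValueError("need two equal-length, non-empty score lists")
    span = max_score - min_score
    credit = sum(1 - abs(a - b) / span for a, b in zip(first, second))
    return 100 * credit / len(first)


# Hypothetical scores from two raters on the same five work samples.
rater_a = [3, 2, 4, 1, 2]
rater_b = [3, 3, 4, 1, 0]

print(weighted_percent_agreement(rater_a, rater_b))  # 85.0
```

Under this scheme, a one-point disagreement on the five-point scale still earns 75 percent credit, which is one reason weighted agreement figures tend to run higher than exact-match rates.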

“A key feature of our assessment strategy is the scoring of authentic student work using a common rubric, which the AAC&U VALUE rubrics provide,” said David Switzer, faculty fellow for assessment and associate professor of economics at St. Cloud State University. “Our participation in the [collaborative] has given us both the knowledge and the capacity to assess student work from all across the university, and shed light on how to assess student learning in co-curricular programs.”

The report notes that policymakers want to know more about student learning, too. That’s potentially concerning to professors who worry about assessment data being used to make decisions about funding or other matters that may not actually benefit the institution. Yet some professors involved in the study said it helped take some pressure off instructors.

“On our campus, in particular, we have used the VALUE rubrics as models to launch discussions as we ask faculty to work toward articulating a shared understanding of what it means to be teaching courses that fulfill our distribution requirements,” Alexis D. Hart, associate professor of English and director of writing at Allegheny College, said in the report. “These discussions have really changed the tenor of assessment from one of policing faculty teaching practices to enriching conversations about teaching and learning and how assessment can inform those conversations.”

Looking ahead, AAC&U is focused on disaggregating data based on student characteristics, such as whether they’re from low-income families.

“The ongoing VALUE initiative puts learning outcomes quality and improvement in the hands of state and institutional leaders, faculty, and students — exactly where it needs to be if educators and policy makers are serious about preparing graduates for success beyond the first job and in their personal, civic and social lives, regardless of what type of institution they attend,” the report says.

AAC&U worked together on the VALUE initiative with the State Higher Education Executive Officers, the Multi-State Collaborative to Advance Quality Student Learning, the Minnesota Collaborative and the Great Lakes Colleges Association Collaborative, along with participating institutions. Taskstream is the project's technical partner.

The Bill & Melinda Gates, Spencer, Sherman Fairchild, Lumina and State Farm Companies Foundations all funded the initiative, along with the Fund for the Improvement of Postsecondary Education and the U.S. Education Department.

'A Win for All Parties'

Carol Rutz, director of the College Writing Program at Carleton College, appreciates the complexities of assessing student learning, in part from having co-written the 2016 book Faculty Development and Student Learning: Assessing the Connections. Carleton wasn’t involved in the VALUE study, but Rutz was on the national team that developed the initial VALUE rubric for written communication. She said she was initially “dubious” that AAC&U's rubrics were being tested as benchmarks, since she’d argued that local context matters “more than ratings derived from a national, generic instrument,” which is what many object to about standardized tests.

Now, though, Rutz said, “I can better appreciate what the study offers.” She called the VALUE initiative’s strength its design, in that rubrics were taught to faculty members from participating institutions, the material that was rated was coded and distributed among readers, and the ratings were analyzed “with clear awareness of the limitations.” AAC&U cautions, for example, that the preliminary data "are not generalizable beyond the three individual VALUE Collaboratives," and that extrapolating meaning and "making inferences about the quality of learning at the state or national level are entirely inappropriate at this time."

Professors “reading genuine student work shows that student products can be assessed outside of the classroom situation in a responsible way, thanks in large measure to qualified readers,” Rutz added. Better yet, “the data point toward the necessity for considering the assignment as well as the student work itself.” Indeed, that's a point her recent book makes, and part of Carleton's portfolio assessment that has provided, in Rutz's words, successful, iterative faculty development on assignment design.

“If the planets had aligned favorably, I would have jumped at the chance to see how Carleton students' work stacks up. For now, I look forward to hearing more about the VALUE study, including faculty development implications.”

Terrel Rhodes, vice president of quality, curriculum and assessment and executive director of VALUE at AAC&U, said grades were long thought to be good measures of learning, and they still are — except that they rely heavily on content mastery. Increasingly, he said, accreditors, employers and others want to see that students have learned “transferable” skills, not just content.

“If we have seen anything in recent years [in] change and news, challenging issues increase in occurrence,” Rhodes said. “We see how incredibly important it is for students to not only know things, but know what to do with what they know.” They also need to know where to look when they don't know something, he added.

As for VALUE, he said, “AAC&U was in right place at right time, with a strong frame and tools that faculty and administrators and employers accepted.”

Natasha Jankowski, director of the National Institute for Learning Outcomes Assessment and a research assistant professor of education policy, organization and leadership at the University of Illinois at Urbana-Champaign, called VALUE “a wonderful shift away from assessment as a compliance or reporting exercise to one that is embedded in the lived experiences of faculty.”

By making clear connections to assignments in the classroom, she said, policy makers are able to get a “better picture of student learning, while faculty receive meaningful information that can be used to revise teaching and learning strategies in ways that benefit students. This is a win for all parties involved and one that positions policy makers to better communicate with institutions on measures of importance to both parties.”