Each year, more colleges announce that they are ending requirements that applicants submit SAT and ACT scores -- joining hundreds of others in the "test-optional" camp. Just this week, Augsburg University in Minnesota made such a shift. The university's announcement said that the policy had strong faculty support and was seen as likely to boost the diversity of the student body. High school grades in college preparatory courses are the key to good admissions decisions, said officials there, just as their peers have said at many other institutions.

But even as more colleges drop the testing requirements, the College Board has insisted that evidence backs its view that the best way to predict college success is to review both grades and test scores. Measuring Success: Testing, Grades and the Future of College Admissions, published by Johns Hopkins University Press in January, was a major salvo in the debate. Essays in the book (edited by three scholars with current or past ties to the College Board) described research that generally questioned test-optional policies. The policies, the book argued, have failed to add to diversity -- or at a minimum have not led to increases in diversity that outpace gains at institutions with testing requirements. Further, the book highlighted research on high school grade inflation, which some see as an argument for standardized testing. (Of course, others don't.)

Studying the impact of test-optional policies isn't easy, say both supporters and critics of such policies, because control groups are hard to come by. A college dropping testing requirements as part of an effort to attract minority students is likely doing multiple things with that goal in mind. And shifts having nothing to do with testing can depress applications or change the pool.

But despite these challenges, the College Board (as evidenced by the new book) and skeptics of testing continue to study the impact of test-optional policies.

One such major study was released Thursday -- offering data from 28 colleges and universities and 955,774 applicants over multiyear periods for each of those institutions. The institutions studied were all four-year institutions with test-optional policies, and they were compared to peer institutions that require testing. The report, which generally supports test-optional policies, is by Steve Syverson, assistant vice chancellor for enrollment management at the University of Washington at Bothell; Valerie W. Franks, a consultant; and William C. Hiss, a former dean of admissions at Bates College who has been a longtime proponent and scholar of test-optional policies.

The conclusion of the new report says the findings show that tests indeed fail to identify talented applicants who can succeed in higher education -- and that applicants who opt not to submit scores are in many cases making wise decisions. The test-optional movement, the authors write, reflects a broader shift in society away from "a narrow assessment" of potential.

Among the findings from the sample studied:

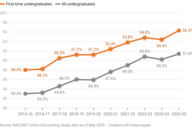

- The years following adoption of a test-optional policy saw increases in the total number of applications -- by an average of 29 percent at private institutions and 11 percent at public institutions.

- While the degrees varied, institutions that went test optional saw gains in the numbers of black and Latino students applying and being admitted to their institutions.

- About one-fourth of all applicants to the test-optional colleges opted not to submit scores. (The colleges studied all consider the SAT or ACT scores of those who submit them.)

- Underrepresented minority students were more likely than others to decide not to submit. Among black students, 35 percent opted not to submit. But the figure was only 18 percent for white students. (Women were more likely than men to decide not to submit scores.)

- "Non-submitters" (as the report termed those who didn't submit scores) were slightly less likely to be admitted to the colleges to which they applied, but their yield (the rates at which accepted applicants enroll) was higher.

- First-year grades were slightly lower for non-submitters, but they ended up highly successful, graduating at equivalent rates or -- at some institutions -- slightly higher rates than did those who submitted test scores. This, the report says, is "the ultimate proof of success."

The College Board referred questions about the new report to Jack Buckley, a former College Board official who is one of the co-editors of Measuring Success.

Buckley said he found the evidence in the report to be "all over the place," with some examples backing test-optional policies and others not. He said the study lacked a sufficiently rigorous way to determine the impact of going test optional.

Most liberal arts colleges (a sector that has been quick to embrace test-optional admissions) have been getting more diverse in recent years, Buckley said. He said he wasn't questioning the data reported by the colleges studied, but the ability to establish causation. Of the diversity reported in the study, he said, "that doesn't mean that test optional caused it."

But Akil Bello, who as director of equity and access for the Princeton Review works with low-income and minority students in New York City and nationally, said the findings rang true to him.

Bello said he believes standardized tests fail to measure the potential of many minority and low-income students, leaving them at a disadvantage in being considered for admission. Further, he said, testing costs money, and it can be expensive for low-income applicants.

And he said that colleges send an important, encouraging message to such students by going test optional.

"When a college announces a test-optional policy, it also conveys to students that the college is aware of and sensitive to issues that impact low-income and underrepresented students and this awareness can signal to applicants an aware and inviting institutional culture," Bello said.