

A 2015 study found that “social inequality” across a range of disciplines was so bad that just 25 percent of Ph.D. institutions produced 71 to 86 percent of tenured and tenure-track professors, depending on field.

The concentration grew more extreme toward the top of the researchers’ own program ranking system: the top 10 programs in each discipline produced 1.6 to 3 times more faculty than the next 10 programs, and programs ranked 11 to 20 produced 2.3 to 5.6 times more professors than the 10 after them. In theory, this reflects the quality of those programs. But critics say in-group hiring is also about snobbery.
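The two statistics in that study, the share of faculty trained by the top quarter of programs and the ratio between adjacent tiers of 10 programs, can be sketched as simple arithmetic. This is an illustration only: it assumes the programs are already listed in prestige-rank order (it does not reproduce the researchers’ placement-based ranking method), and the placement counts are made up.

```python
from math import ceil

# Hypothetical input: number of faculty each Ph.D. program placed, listed in
# prestige-rank order (rank 1 first). The ranking is assumed to be given.


def top_share(counts_by_rank: list[int], frac: float = 0.25) -> float:
    """Fraction of all placed faculty trained by the top `frac` of ranked programs."""
    k = ceil(frac * len(counts_by_rank))
    return sum(counts_by_rank[:k]) / sum(counts_by_rank)


def tier_ratio(counts_by_rank: list[int], tier: int = 10) -> float:
    """Faculty from programs ranked 1..tier divided by faculty from ranks tier+1..2*tier."""
    return sum(counts_by_rank[:tier]) / sum(counts_by_rank[tier:2 * tier])


counts = [40, 25, 15, 8, 5, 4, 2, 1]  # made-up counts for eight programs
print(top_share(counts))              # share trained by the top 2 of 8 programs
print(tier_ratio(counts, tier=2))     # ranks 1-2 compared with ranks 3-4
```

With real data, `tier=10` gives the "top 10 versus next 10" comparison; the small tier here just keeps the toy example short.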

Now computer scientists at the University of Colorado at Boulder who led that earlier study say academic pedigree isn’t destiny after all -- at least in terms of future productivity.

“Our results show that the prestige of faculty’s current work environment, not their training environment, drives their future scientific productivity,” says the new paper in Proceedings of the National Academy of Sciences. Current and past locations, meanwhile, “drive prominence.”

That is, when it comes to actual research output, where one works is more important than where one trained.

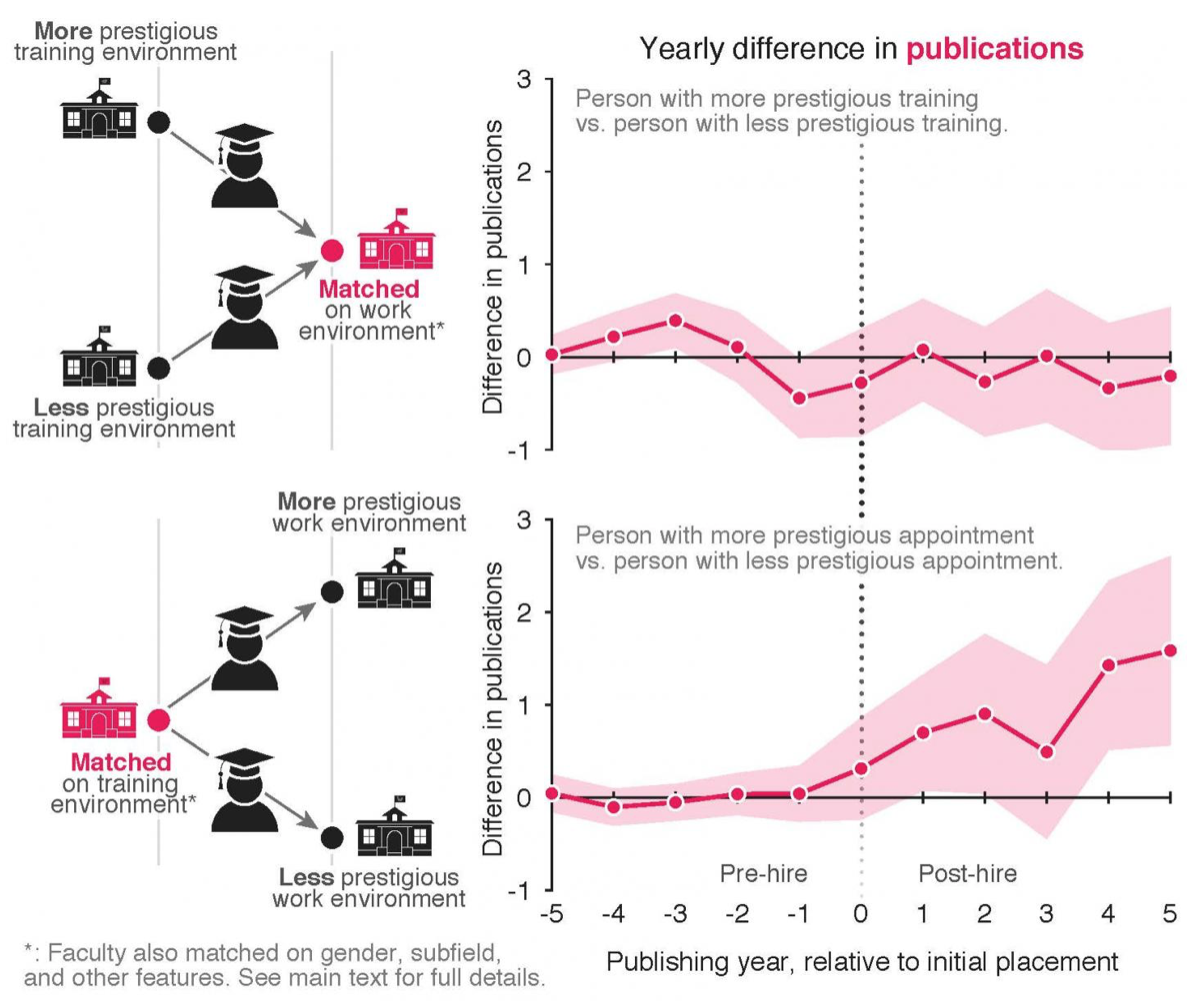

For this new study, researchers looked at productivity and prominence (measured by the number of published papers and scholarly citations, respectively) for 2,453 tenure-line faculty members in 205 Ph.D.-granting computer science departments. The analysis was based on a matched-pairs experimental design. Unlike a completely randomized design, a matched-pairs design involves one binary factor and sorts the experimental units into blocks of two similar units each, so that comparisons are made within each pair.
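The matched-pairs logic can be sketched in a few lines of Python. This is not the authors’ procedure, which the article does not spell out: the `Faculty` record, its prestige scores, the greedy pairing rule and the `tol` threshold are all hypothetical. Each pair is formed from two people with similar current-job prestige, the binary factor is which member trained at the more prestigious Ph.D. program, and the estimate is the average within-pair difference in papers published in the five years after hire.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Faculty:
    phd_prestige: float  # hypothetical score: higher = more prestigious training program
    job_prestige: float  # hypothetical score for the hiring department
    papers_5yr: int      # papers published in the five years after hire


def matched_pair_effect(people: list[Faculty], tol: float = 0.05) -> tuple[float, int]:
    """Greedily pair neighbors in job prestige (within `tol`) whose training prestige
    differs; return the mean of (higher-pedigree minus lower-pedigree) papers and
    the number of pairs formed."""
    pool = sorted(people, key=lambda p: p.job_prestige)
    diffs = []
    i = 0
    while i + 1 < len(pool):
        a, b = pool[i], pool[i + 1]
        if abs(a.job_prestige - b.job_prestige) <= tol and a.phd_prestige != b.phd_prestige:
            hi, lo = (a, b) if a.phd_prestige > b.phd_prestige else (b, a)
            diffs.append(hi.papers_5yr - lo.papers_5yr)
            i += 2  # both people are now used in a pair
        else:
            i += 1  # no acceptable partner for pool[i]; skip it
    return (mean(diffs) if diffs else 0.0), len(diffs)


people = [
    Faculty(0.5, 0.10, 5),   # no close match in job prestige, left unpaired
    Faculty(0.9, 0.50, 20),
    Faculty(0.3, 0.52, 18),
    Faculty(0.8, 0.80, 30),
    Faculty(0.2, 0.81, 34),
]
effect, n_pairs = matched_pair_effect(people)
print(effect, n_pairs)
```

Swapping the roles of `phd_prestige` and `job_prestige` gives the opposite comparison, holding training constant and varying the appointment. The published analysis also matched on prior productivity and prominence, which this toy version ignores.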

The relevant time period was five years before and five years after the Ph.D.s’ first faculty appointments. The professors together accounted for over 200,000 publications and 7.4 million citations.

Regarding prominence, Ph.D.s from more prestigious programs tended to continue to accumulate citations from their work as trainees (similar to the 2015 paper, prestige here was based on an original, placement-based ranking system). But the prestige of the training programs played little to no role in how many papers the Ph.D.s wrote after their faculty placements.

For matched pairs of faculty members with appointments at similarly prestigious institutions, the person with the more prestigious Ph.D. pedigree was not more productive in the first five years posthire. That person did receive 301 more citations, on average, however.

By comparison, among matched pairs of professors with similarly prestigious training and with similar productivity and prominence, the person with the more prestigious appointment wrote 5.1 more papers during the first five years posthire. Those with more prestigious appointments also received 344 more citations, on average.

Professors at the top 20 percent of institutions in the ranking produced, on average, 17 more publications in their first five years and got 824 more citations than the faculty members at the bottom 20 percent of institutions.

Source: Samuel Way

The new study’s lead author, Samuel Way, a postdoctoral researcher in computer science at Boulder who earned his doctorate there, has said that if both he and a Ph.D. from a top program such as the Massachusetts Institute of Technology ended up at, say, computer science powerhouse Stanford University as professors, their research output would be the same, based on the analysis.

Why is that? Way and his co-authors -- Aaron Clauset, associate professor; Allison C. Morgan, Ph.D. candidate; and Daniel B. Larremore, assistant professor, all computer scientists at Boulder -- considered several possible explanations: hiring criteria, such as productivity during the Ph.D.; departmental expectations for faculty; and retention of productive professors. They found only weak evidence for each. The prestige of the current department, by contrast, was strongly correlated with productivity.

The findings “have direct implications for research on the science of science, which often assumes, implicitly if not explicitly, that meritocratic principles or mechanisms govern the production of knowledge,” the paper says. “Theories and models that fail to account for the environmental mechanism identified here, and the more general causal effects of prestige on productivity and prominence, will thus be incomplete.”

Asked whether they thought their results might hold across disciplines, Way and Clauset said in a joint email that past work -- including their own -- suggests that most other disciplines have similar patterns in faculty hiring and in productivity, "so we see no reason not to expect similar effects across fields due to environment."

That said, Way and Clauset added, the degree to which the results hold in other fields “likely comes down to whether the underlying mechanisms that drive the observed correlates of productivity in computer science also hold in those fields.” For example, a scholar’s productivity could be more “directly affected by access to institutional resources than it is in computer science -- things like laboratory equipment and supercomputers for biologists, libraries for historians, etc.” Advising and publishing norms, such as whether Ph.D. students tend to co-author papers with their advisers, may also matter.