Lee Coffin, Dartmouth’s dean of admissions and financial aid, expounds on the college’s reasoning for reinstating standardized testing in admissions.

Dartmouth College this week became the first Ivy League institution to reinstate standardized testing requirements for applicants.

The decision has prompted another round of debate over the pros and cons of testing in admissions and—as most selective colleges approach the expiration date of their pandemic-era test-optional policies—a flurry of speculation about whether Dartmouth’s peers will fall in line.

Lee Coffin, Dartmouth’s dean of admissions and financial aid—a position created for him in 2016, when he joined the institution from Tufts University—has been anticipating this moment for years. What started as an emergency suspension of test requirements dragged on into first one extension, then another and another. When Dartmouth’s new president, Sian Beilock, started the job last summer, Coffin said evaluating the testing question was a top priority.

Coffin spoke with Inside Higher Ed about what led to the decision, how his thinking on the issue has evolved over 30 years in admissions and whether he sees Dartmouth as a bellwether for other colleges re-evaluating their testing policies.

Excerpts of the conversation follow, edited for clarity and concision.

Q: Dartmouth is the first Ivy League institution to take this path at a pivotal moment for testing, as colleges reconsider the near-universal adoption of test-optional policies during the pandemic. How have you thought about the weight this decision carries throughout higher ed?

A: We made the decision to be test optional in a spontaneous moment as the pandemic really took hold and we were witnessing in real time the shift of all sorts of norms related to our work, whether it was travel or in-person things on campus, and certainly elements of the application. If you go all the way back to June 2020, when we announced we would pause the policy, I used the word “pause” intentionally then, because it was my sense that this was a temporary decision and that we would return to our required policy as the pandemic allowed. Who knew that was going to end up being a three-year cycle of extensions? This year, we’re recommending students submit scores because we value having another piece of information.

We were really deliberate in the way we made this announcement yesterday to emphasize that we looked at this through the prism of Dartmouth College alone. The faculty looked at our data and gave me a recommendation about our admission process. We did not see this decision at Dartmouth as a more universal truth that everybody must follow. I think there’s lots of schools—many of my peers since I was at Tufts and Connecticut College before that—have been test optional for decades, and they do it well and it’s integral to the way they may read and evaluate their class. And there are others that have maintained testing or, like MIT, reactivated it because they saw a local rationale to do it. I would put Dartmouth in that latter category.

Q: Was recommending testing versus taking a neutral stance new for you this cycle?

A: Yes. For the Classes of 2025, ’26 and ’27, we were optional, and the language for everybody was “Access to testing remains uneven, so include testing or not as your situation allows.” And what started to happen in the third year is we started hearing from [high] school counselors that most of the students in their class had access to testing again, but now the question had shifted to, “Should I or should I not include my scores?” Which for us was never really the point of the pause. That was a public health stance, not a critique of testing. So as we went into this fourth cycle, we said, “Let’s recommend it.” That was a clue that we were leaning towards a reactivation.

Q: So why re-evaluate and require it, instead of keeping the recommendation policy?

A: I had a new president [former Barnard College president Sian Beilock] start last June, and part of my goal with the testing policy was, I wanted the new president to be able to think about this before I just made a decision. And so my first conversation with her a year ago was “This is coming. How would you like to consider it?” And she said, “I like evidence-based policies, so let’s gather some data.” She reached out to a group of faculty in educational policy—you’ve probably seen the study they produced—and that informed our decision. So that path was really deliberate. I said to the president, and then to the faculty researchers, that this feels like an institutional question, not a singular admission question. While we paused during the pandemic without great care or consultation, I wanted to make sure that this next step was fully considered. So their report, which came back with a strong recommendation to reactivate [testing requirements], for me was an important proof point.

Q: It sounds like this was always conceived as a pause. How much was a return to requiring scores a foregone conclusion? Were you and other leaders at the university open to a different way forward?

A: I would describe myself as agnostic as we headed into the study; I didn’t share a personal point of view with the faculty. I let them study it and guide the conversation. And if they’d come back at the end of their study and said, “There’s clear evidence that optional is powerful in a really important way,” I think we would have gone into that conversation with all of the campus faculty committees and thought about it more. Did I have a sense, though? Yeah, my instinct was that testing was valuable when present. And I was starting to see unanswered questions continue to emerge for candidacies where there was no testing, and the lack of information gave us pause. So the report when it came back didn’t surprise me. When I read it for the first time, I thought, this syncs up with my lived experience as an admission officer.

Q: This has obviously been a contentious issue for decades, and you’ve been in the field for a long time …

A: Oh, since I was a baby in the field. This has been a contentious issue since I was a first-year admission officer [at Connecticut College]. I mean, I think standardized testing is an ongoing source of conversation and people have strong disagreements about it. And I think there’s a place for all of that and valid arguments on both sides.

Q: I take your point, but is there any kind of recognition, even in hindsight, of the pivotal nature of this decision? The coverage in The New York Times, and the fact that this debate is reigniting just as lots of selective colleges are trying to decide on their own policies, makes it hard to conceive of Dartmouth’s decision in a vacuum. Did you have a sense that, well, maybe this is going to have an impact beyond our campus whether we want it to or not?

A: This would be a better question for my president, but I will speak for her for a second. Yes, she definitely saw a leadership moment, and she wanted to stand with data. I’ve heard her say, “We are being guided by evidence and having policy informed by data.” So I think the fact that The New York Times covered this right out of the gate was a signal to the research that had been happening, that David Leonhardt was already covering. And Bruce Sacerdote, who was the lead researcher on our team, was very connected to David and to the studies by Raj Chetty at Harvard and John Friedman at Brown. So there was a network among the researchers that pre-existed the reactivation conversation.

But the thing I’ve learned is that when you make a move in this Ivy space, people notice. I’m not saying that in any kind of exaggerated or elitist way, but you can’t make the decision without expecting attention. I think to the degree that our study helps other places think, well, what other questions should we be asking? That’s something I hope for.

The finding [in the Dartmouth study] that I found most provocative when I first read it was the point that testing expands access. And when it’s absent, it creates a hesitation that is unintentional. I hadn’t really heard anybody say it like that before, but of course that’s right. Information in and of itself should not be seen to be controversial. And I think to the degree that, in our holistic and individualized process, we’re able to say, “What’s the context from which these scores or transcripts or recommendations were produced?”—that’s valuable. Certainly during the pandemic, we started really focusing more deliberately on context for specific high schools. When we see schools saying, “Here’s our mean, here’s our 50 percent range,” when we see scores that exceed the 75th percentile, which is very common in our pool, that’s a proof point. That helps us look at a transcript and say, OK, these scores from that place make sense.

Q: And so that conclusion, while maybe surprising to you, was in line with your experience in the field?

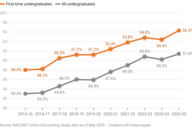

A: Yeah, and it’s evolved over time. In my 13 years at Tufts and the last eight at Dartmouth, what’s happened year by year is the geographic epicenter of our applicant pool is shifting farther and farther away from New England. And that’s exciting. So I look at my Dartmouth total this year, and 65 percent of our applicant pool lives in the U.S. South or West or they’re international. That’s a record high. And as it moves in those different geographic directions, we’re getting applicants from new high schools, and more information is helpful. Most of our students have something close to straight A’s, and that’s wonderful, but also, in and of itself, not a helpful way of sorting. Test scores—looking at public high school profiles from Nevada, for example—are useful.

Q: The big elephant behind everything we’re talking about is last summer’s Supreme Court decision striking down affirmative action. How did that factor into this decision? Did you consider the widespread critiques of testing requirements as barriers to diversity and access?

A: Certainly. But as an admission officer for the last 30 years, it’s been striking to see the differences between different high schools and the way education in the United States is not equal as you move from town to town, never mind state to state. So we’re looking at testing as a reflection of that K-12 disequilibrium. We’re not saying it’s not capturing it, but contextually we’re able to say, “What does this score tell us about the place where it was generated, the neighborhood where the student is?” How do we use them to meet you where you are? As you move across this country, this heterogeneous landscape, it starts to mitigate some of the critique that testing favors the wealthy. It does, but only if you define high and low scores in a strict spectrum. In some places, a 1400 is not high; in some places, it’s lower than the norm. And in other places, it’s remarkably high. And that’s also true for 1200: there are places where that 1200 is unheard-of and others where that 1200 would be at the low end of the data distribution.

What I’m hearing from my team and from other admission officers who check in with us is “That’s the way we do it, too.” I don’t think higher ed has done a very elegant job of being clear about this. One of the things I’m committed to doing as we reactivate is imagining new ways of reporting our test profile, giving students more opportunities to see that “Oh, this is a really good score in my context.” I think that’s another post-pandemic opportunity. It’s not just that we paused and unpaused and are pretending it’s just 2019 all over again. We’ve learned things during the last four cycles that have been illuminating, and we’ve baked this contextualized view of testing into our reading. I don’t know if everybody’s done that, but we’ve done it.

Q: The other big thing that’s changed post-pandemic is confidence in current applicants’ academic preparedness: the extent of grade inflation and learning loss. How much did that factor into this decision?

A: It was definitely part of the consideration. The impact of the pandemic on learning is significant and harder to quantify as we keep moving through. But there’s no question that remote learning in high school and middle school had an impact. It’s pretty clear across at least my pool, where we’re meeting students who have very strong grades in high school and where there are lots of students who share that profile. I’m not criticizing the high schools, I’m just acknowledging that the surplus of excellence requires more information to be able to assess it.

Where I work, given our selectivity, it complicates the question of “How do we make informed decisions that require some precision among a pool of high achievers?” Information is valuable, and why would you leave any information on the table? We’re not saying in this reactivation that your test score defines you. We’re not saying we no longer value the whole person or the integrity of a transcript and curriculum; those remain the heart of our process. But having scores as part of that assessment is meaningful to us.

Q: As an admissions veteran, has your thinking on testing—testing requirements, the benefits of standardized exams in general, its prejudices—evolved over time?

A: Yeah, of course. But testing itself has changed a lot. I think all the way back to my early years, when the SAT and ACT were paired with Subject Tests, or Achievement Tests, as they were called farther back. Those are gone. Many high schools would have a class rank, but that’s almost entirely gone away. So you had more data. One by one, those things have been removed. And in many school districts, the aggregate GPA is not reported anymore, either. Over time, as everything else disappears, testing remains, and there’s value there because so many other things have gone.

We’re in a data deficit. And at the same time, the volume has gone up. To use Tufts as an example, when I started there, we had 13,000 applications, I think, maybe 14,000. When I left it was about 20,000, and now it’s closer to 30,000. As the volume goes up, I ask the question: How do you make informed decisions in a big pool given the same number of days to do so?

This is where the critics say, “You don’t know how to do your job.” I know how to do my job, how to read a file. But I need to be able to follow some statistical measurements of academic achievement. Everybody needs to trust admission officers a bit more than they do; that the way we do our work is informed through many lenses, that we’re trying to make the best decisions we can with the information we have. The more heterogeneous the pool has become—and today it’s as wildly diverse as I’ve ever seen, which is exciting—the more we need different ways of understanding those applications.

But this will continue to be a live question for us, long after the media attention fades. Policy evolves, and we’ll pay attention to what the data say. And as the years go on, I’m hoping we’ll be able to make testing a part of our process in a holistic way, redefine what that means in a way that’s transparent and maybe upends some of the conventional wisdom we all hang onto. That feels important; when I’ve said that to high school guidance counselors, they all say that would be great. The worry comes from trying to jam one score into a narrative; what we want to say is “This score is meaningful in its complexity.”