Students have trouble understanding general education learning outcomes.

Kar-Tr/iStock/Getty Images Plus

Connecting students’ coursework to their future career is seen as an engagement tool and a necessity for career development. At the Georgia Institute of Technology, gathering data to evaluate how students view their own learning outcomes and how general education courses are targeting those outcomes required creative solutions.

Sarah Wu, the academic assessment manager at the Office of Academic Effectiveness at Georgia Tech, shared the results of a feedback project her team orchestrated during the American Association of Colleges and Universities’ recent conference on general education, pedagogy and assessment.

What’s the sitch: Georgia Tech established its learning outcomes in 2011: communication; quantitative; computing; humanities, fine arts and ethics; natural sciences, math and technology; and social sciences.

As the institution looks to redesign its general education courses and, in turn, their learning outcomes, administrators turned to student perspectives.

Wu has found it challenging to incorporate the student perspective in reports or decision-making, something that reflects a larger concern in academia.

Survey data from her department often fell short in response rates, making it hard to elevate issues to more senior leadership, she adds.

While some institutions add students to their curriculum review committees, Georgia Tech’s general education subcommittee lacked student representation. Instead, that team used faculty judgement based on student artifacts for outcome-level assessment.

The how to: To better understand students’ perspectives on their general education courses, Wu and her colleagues created three avenues of student feedback.

The first was an online forum discussion about gen ed outcomes created on Microsoft Teams. In this space, students would respond to discussion posts organized by the learning outcomes and interact with each other and researchers.

The second method was a focus group, and the third involved one-on-one interviews followed by a short survey.

Most students opted in to the online discussion, Wu shares, and a total of 66 students participated across mediums.

For the conversations, Wu and her team used a lengthy document of prompts to facilitate discussion. Questions covered how to measure the expected outcomes, the measures of execution, whether the target was acceptable, how to respond to data and general suggestions for improvement.

As an incentive to participate, students received free swag, like T-shirts, notebooks and pens, from campus partners.

The outcomes: From their research, Wu’s team found the outcomes needed to be better specified in terms of visibility, wording and how they connect to career preparation.

Some students said they had never seen the learning outcomes before. When students did engage with the ideas, they found the wording confusing or difficult to understand.

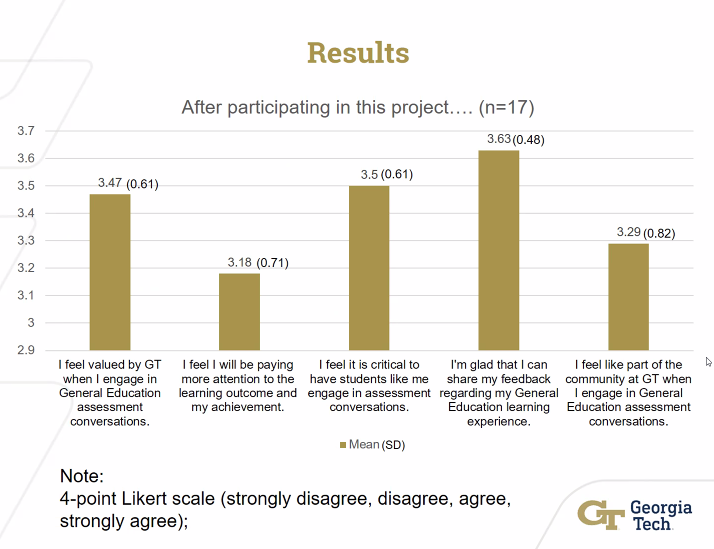

Students who participated indicated in the survey that they felt more valued by the institution as a result and that they consider it critical for students to engage in assessment conversations.

What’s under review: Georgia Tech is now looking to improve its communication surrounding outcomes.

Academic leaders will revise the “computing” outcome to reflect the current state of education surrounding the topic, something both faculty and students have asked for.

The university is also going to increase its transfer student support measures, like tutoring, to improve performance among that group.

Another look at student review: Faculty and staff can add student voices and perspectives to other areas of general education assessment, Wu shares. At the course level, for example, students can contribute to improving assignment design and modifying course syllabi to establish clearer outcomes.

“[Students] want to see the impact and want to see improvement,” she shared. “So our students [are] really engaging in improving assignment design and modify the service and at the institutional level.”
