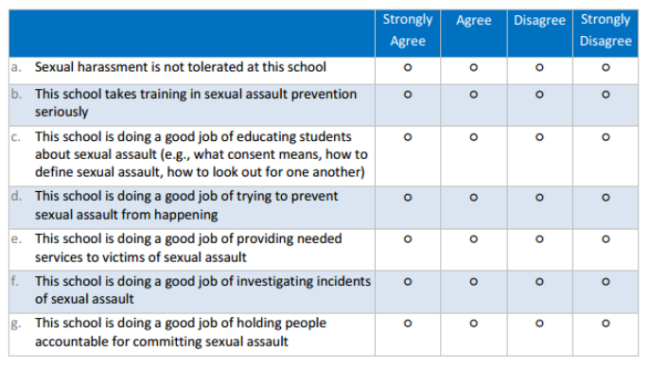

A section from a climate survey produced by the U.S. Department of Justice

DOJ | RTI International

At a news conference in April 2014, Vice President Joe Biden called on colleges to “step up” their efforts combating campus sexual assault.

The request included a specific suggestion: colleges should voluntarily conduct anonymous surveys that gauge the “climate” on their campuses surrounding sexual violence and harassment. “They have a moral responsibility to know what’s happening on their campus,” Biden said.

That July, a bipartisan group of senators introduced legislation that would require all colleges to conduct such surveys; a year earlier the U.S. Department of Education had begun making climate surveys a standard part of its resolution agreements with colleges under investigation for violating Title IX of the Education Amendments of 1972.

When the White House suggested institutions begin conducting the surveys, colleges had few tools to turn to, and many balked at being forced to develop the expensive surveys on their own. Today, colleges have plenty of choices. But there’s debate over the merits and shortcomings of each option -- and there's disagreement on what the surveys’ purposes are.

Researchers say climate surveys should be about assessing what can be done to improve the environment on a particular campus. Politicians say the surveys should be about accountability and allowing parents to know how safe a college is before sending their children there.

Individual institutions -- including the University of Kentucky, the University of Michigan and Rutgers University -- have developed surveys. The White House released a prototype survey of its own. The Justice Department has created several surveys, some in partnership with institutions, some with large research firms. Trade organizations and research consortiums have also produced surveys, with some groups offering the tools to institutions for free, while others charge thousands of dollars.

"You want colleges to have a few really good options," said Noël Busch-Armendariz, director of the University of Texas at Austin’s Institute on Domestic Violence & Sexual Assault. "The field of interpersonal violence research has been around for 40 years, and now student activists and the White House have helped propel this national discussion about learning more about who this affects. Researchers have readied the field scientifically, and we have gotten more sophisticated in understanding how to measure these issues. Now a window of opportunity has opened to use that research."

What to Ask?

In November 2014, the Association of American Universities, feeling pressure from Congress and the White House and opposing the idea of legislation on the subject, began developing a survey with Westat for its members to use. The effort proved controversial.

Concerned over the AAU’s promise to only release aggregate data, victims’ advocates said the plan “smack[ed] of institutional protectionism.” In an open letter, a group of 16 scholars who study sexual violence urged AAU members not to sign on to the survey, objecting to the fact that the survey was “proprietary and therefore not available for scientific examination.” Critics also said the process lacked transparency and input from enough scientists who study sexual assaults on campuses.

In the end, 33 of the AAU’s 60 U.S. members decided not to participate in the survey.

David Cantor, vice president at Westat, said much of the criticism was premature. The survey, which cost $85,000 per institution, was not proprietary, Cantor said, as it was posted online for any campus to use. Dartmouth College and Georgetown University, which are not members of the AAU, have both used the survey.

Though the survey had a lower response rate than the researchers had hoped for, Cantor noted that more than 150,000 students participated, making it one of the largest research efforts of its kind. While critics complained that the AAU released only aggregate data, every institution that used the survey published its own results online.

"The criticism of the survey was based on partial information," Cantor said. "We thought it was successful."

Once under way at the participating campuses, the language used in the survey also proved to be controversial. The questions asked students if they had ever experienced a number of specific sexual activities without their consent, describing those actions with words and phrases such as “oral sex” and “penetration,” and defining the terms using definitions such as “when a person puts a penis, finger or object inside someone else’s vagina or anus.”

Though the consensus among researchers is that using more specific language in climate surveys results in more accurate data about instances of sexual assault, some students reported that the phrasing made them uncomfortable or even triggered harmful memories of their own assaults.

Despite the complaints, nearly all recent campus climate surveys have used specific language like that in the AAU survey, including one developed by RTI International and the U.S. Department of Justice.

“Sexual assault across the board is the most underreported crime in the world,” Christopher Krebs, chief research social scientist at RTI, said in a video about the survey. “So the reality is that colleges and universities don’t know much of anything about the magnitude and nature of the problem of sexual assault among their students. We needed to make sure we could develop a methodology that we thought did collect valid data on these concepts, and we do that by using behaviorally specific terminology.”

The Justice Department survey was created by researchers with a long history of studying the issue (Krebs was one researcher behind the 2007 study favored by the White House that suggested one in five women are sexually assaulted while in college). The survey was also first piloted and fine-tuned at nine institutions, with more than 23,000 students responding, before the tool was released for use by the public.

The survey asked questions meant to gauge the prevalence of sexual assault and other kinds of gender violence, the frequency of students reporting the crime to campus officials and law enforcement, and the effects an assault can have on a victim’s life, including on schoolwork and personal relationships.

Sarah Cook, a professor and associate dean at Georgia State University, said surveys should focus both on victimization and on perpetration. Cook is one of the architects behind the ARC3 Campus Climate Survey, developed by a consortium called the Administrator-Researcher Campus Climate Collaborative.

More than 300 colleges have requested to use that survey, according to ARC3. Because the survey comes in modules, however, the consortium is not sure whether those colleges are using the full survey or only parts of it. The survey also has the backing and involvement of Mary P. Koss, a professor of public health at the University of Arizona and a pioneering researcher on the prevalence of campus sexual assault.

“It is flexible, so instead of the school having to pick the whole survey, they just have to pick the modules they want,” Koss said. “I think it is unwise and a waste of time for individual schools to construct their own survey or to make significant modifications in those they find.”

‘Feeding Frenzy’

ARC3 researchers were particularly troubled by how quickly the number of surveys grew following the White House’s announcement in 2014.

One survey criticized by the ARC3 researchers is being created by ATIXA, an arm of the National Center for Higher Education Risk Management. The survey is still being piloted, but it, too, will consist of different modules. ATIXA estimates that when the survey is ready, it will cost $3,500 for colleges to use the full survey, but some of the modules will be free for its members. For $7,500, the firm will administer the survey for an institution. A polished report about the data would cost an institution another $12,500.

In an email exchange last year that was provided to Inside Higher Ed, several faculty members and student affairs officials said they worried that organizations like ATIXA were trying to cash in on the need for such surveys without doing the necessary research. “Title IX coordinators are very tired of the feeding frenzy,” one dean of students wrote.

But Brett Sokolow, the president of NCHERM, noted that the ATIXA survey has now been in development for nearly two years, and that NCHERM has developed other climate surveys for its clients for nearly two decades.

“Refining it is a process, and we're spending a lot of time on getting the language of the questions right,” Sokolow said. "We're not trying to compete with ARC3 or any other surveys. We're trying to figure out what other researchers aren't. Prevalence surveys are fairly useless in the sense that they don't tell us much about what happens. How much is not the most important question. You're not going to change things just knowing that 10 percent of students are being assaulted versus 15 percent. We want to know what the barriers are, and what would increase reporting."

Busch-Armendariz, of the University of Texas at Austin, said there is no single perfect survey for colleges to use. There are, however, surveys that are better than others, she said.

Busch-Armendariz recently finished a study examining 10 climate surveys, including those produced by the AAU, ARC3, the Higher Education Data Sharing Consortium, the University of Chicago, Johns Hopkins University, the University of Kentucky, the Massachusetts Institute of Technology, the University of Oregon, Rutgers University and the White House. She declined to say which surveys colleges should absolutely avoid, but she was quick to point out her two favorites: the ARC3 survey, which she helped produce, and the survey at Rutgers.

Called #iSPEAK, the Rutgers survey was conducted at the request of the White House Task Force to Protect Students from Sexual Assault. Rutgers’ approach won praise for its mix of traditional questionnaires and focus group interviews.

The survey found that one in five female students at Rutgers had experienced “unwanted sexual contact” since arriving on campus. In May, the University of Michigan reached roughly the same conclusion with its climate survey. At both universities the surveys used a broad definition of sexual assault that included not only incidents involving rape but also unwanted kissing and touching. This has been another point of debate for those creating climate surveys.

When studies by researchers at the University of Kentucky and the Harvard School of Public Health used a narrower definition limited to unwanted or forced penetration, the rate of assault was far lower.

“We know sexual violence means different things to different individuals, so we used a broad definition,” Sarah McMahon, associate director of the Rutgers Center on Violence Against Women and Children, said at the time. “We know all forms of sexual violence are problematic and have serious repercussions.”

In their recommendations to the White House regarding the pilot survey, McMahon and her team stopped short of suggesting that all colleges use the broader definition, instead noting that the phrase “unwanted sexual contact” made it “nearly impossible” for researchers to distinguish among types of sexual violence that differ in severity. “Our recommendation does suggest that we need to have more discussion about how to define and measure sexual violence so that we can compare institutions,” McMahon said.

Comparing institutions is one of the key reasons lawmakers want institutions to conduct climate surveys. The bipartisan legislation first introduced in 2014 would require colleges to not only conduct climate surveys but to publish the results online for prospective students to see. Senator Marco Rubio, a Florida Republican who is a cosponsor of the legislation, said at the time that such information is key for students choosing among colleges.

“Parents and students need to know that the difference in colleges isn’t just in programs or graduation rates, but it’s also in safety,” Rubio said.

Researchers, however, generally warn against using climate surveys to pit one college against another. Christine Lindquist, senior research sociologist at RTI, said having one survey many colleges can use is important "not to call out universities that happen to have high prevalence estimates" but for colleges to be able to learn from one another.

Comparing and ranking colleges "is not particularly helpful," Busch-Armendariz, of the University of Texas, said.

"We like rankings," she said. "But having comparative data like that doesn't do much good, quite frankly. You want colleges to be able to use their surveys to develop programs specific to their campuses. It's about being transparent and being able to be fully informed about the issues. That's the real purpose of having these benchmarks."
