Many professors laugh off their reviews at RateMyProfessors -- after all, “hotness,” one of the site’s metrics (denoted by a chili pepper), doesn’t really translate to tenure or promotion. Yet some research suggests that, like it or not, the site’s ratings correlate with the ratings professors earn on their institutions’ student evaluations of teaching.

Other research suggests those more formal student evaluations of teaching are unreliable, as well. Yet colleges and universities still use them, often to inform high-stakes personnel decisions.

So a new study of 7.9 million ratings on RateMyProfessors claiming to provide “further insight into student perceptions of academic instruction and possible variables in student evaluations” is at least interesting.

The study, published recently in Assessment and Evaluation in Higher Education, analyzed correlations between RateMyProfessors ratings for quality of instruction, easiness, physical attractiveness, discipline and gender. The millions of evaluations concerned some 190,000 U.S. professors with at least 20 evaluations each.

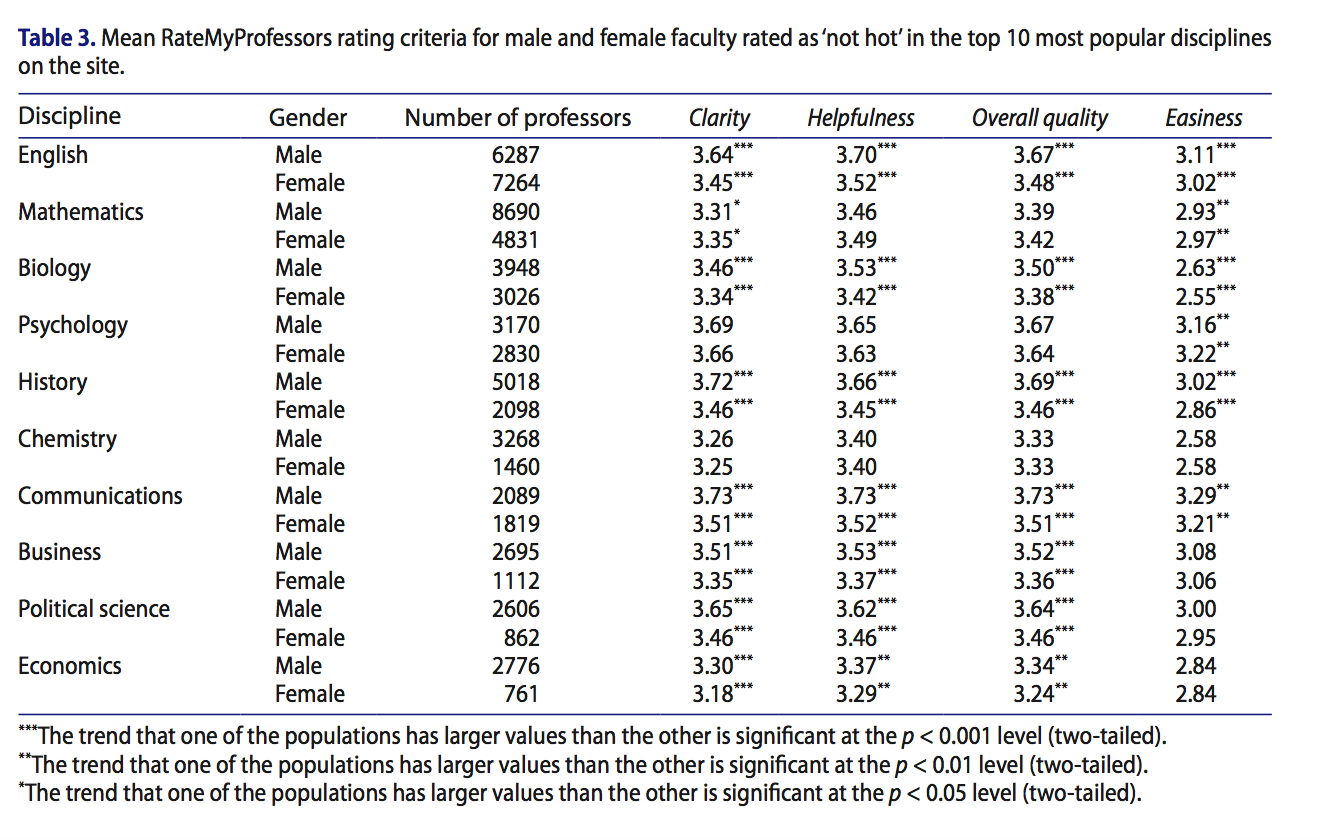

As in other studies of student evaluations of teaching, gender played a role, putting women at a disadvantage. Discipline also correlated strongly with perceived ease or difficulty.

Male instructors had higher overall teaching scores than female instructors across most fields. Female instructors did not have higher scores in any discipline, though a few fields, including chemistry, showed no statistically significant difference. (For gender, the study only considered professors whose names were strongly associated with a particular gender and whom students did not consider "hot." Read on to see why.)

Source: Andrew S. Rosen

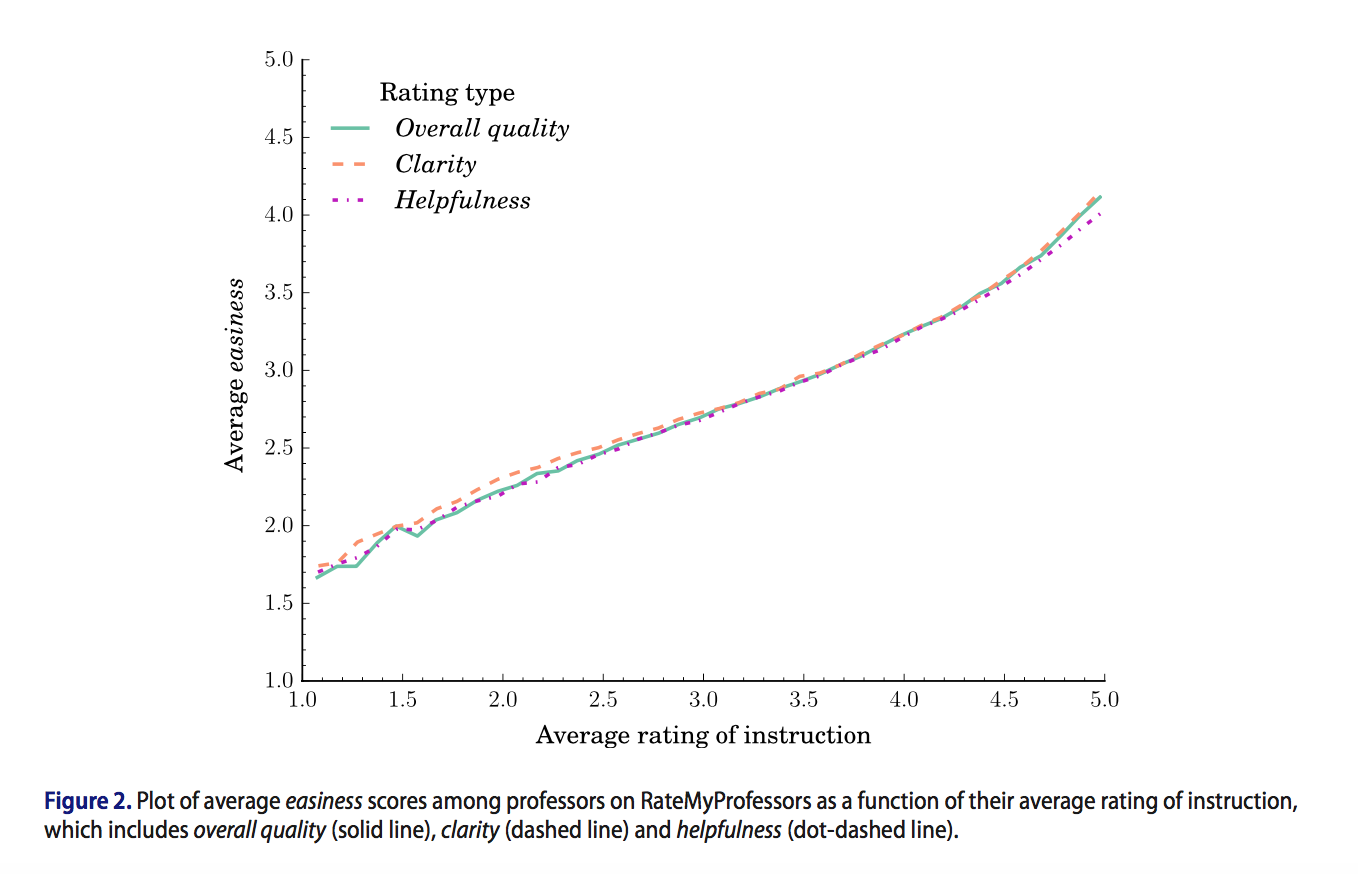

Students also tended to rate professors as significantly better teachers if they perceived the courses to be easy. Professors in the sciences, technology, engineering and math fields had lower scores as a whole than those in the humanities and arts.

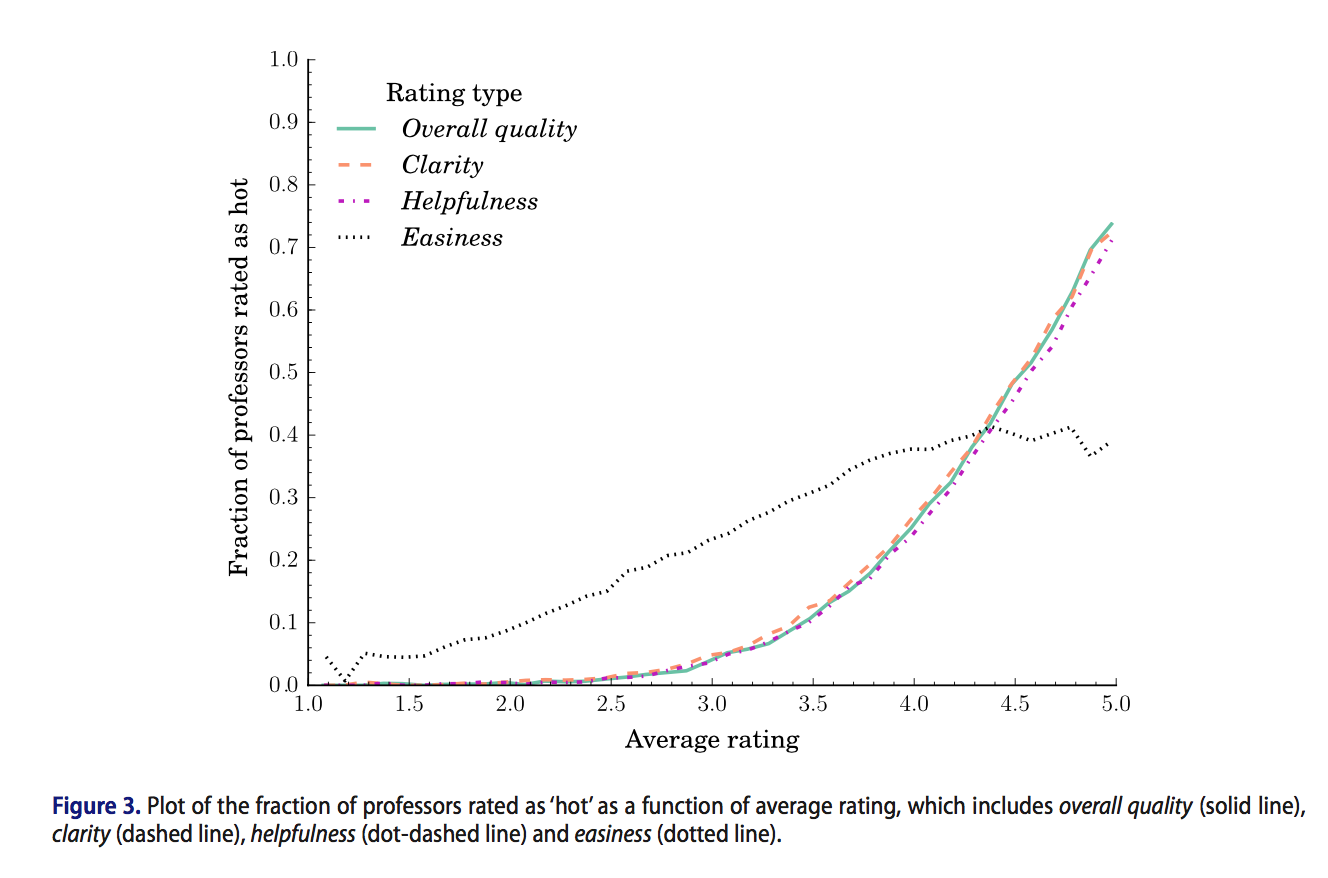

And, sigh, professors rated as attractive also had higher overall teaching scores. That's why the study excluded "hot" professors from its gender analysis.

The study’s author, Andrew S. Rosen, a Ph.D. candidate in chemical engineering at Northwestern University with an interest in computation, said that “even if critics shrug at data from [RateMyProfessors], the biases present on the site are of particular importance,” as they imply that potentially invalid metrics exist in institutional evaluations, too.

Calling student evaluations of teaching something of a “touchy subject,” Rosen said that even if some consider them unreliable, “they still have significant importance in both the course selection process for students and the academic promotion process.”

He added, “I don't anticipate this will change any time soon, so studies like this can highlight ways institutional [student evaluations of teaching] can be more critically and accurately evaluated.”